Sprint: Three.js Mastery - From Blank Canvas to Interactive 3D Worlds

Goal: Master 3D web graphics from first principles using Three.js. You will understand the complete rendering pipeline – scene graphs, cameras, materials, lighting, textures, shaders, physics, and post-processing – and build 13 progressively complex projects from a spinning cube to a full interactive 3D portfolio. By the end you will be able to architect, animate, optimize, and ship production-quality 3D experiences in any modern browser.

Introduction

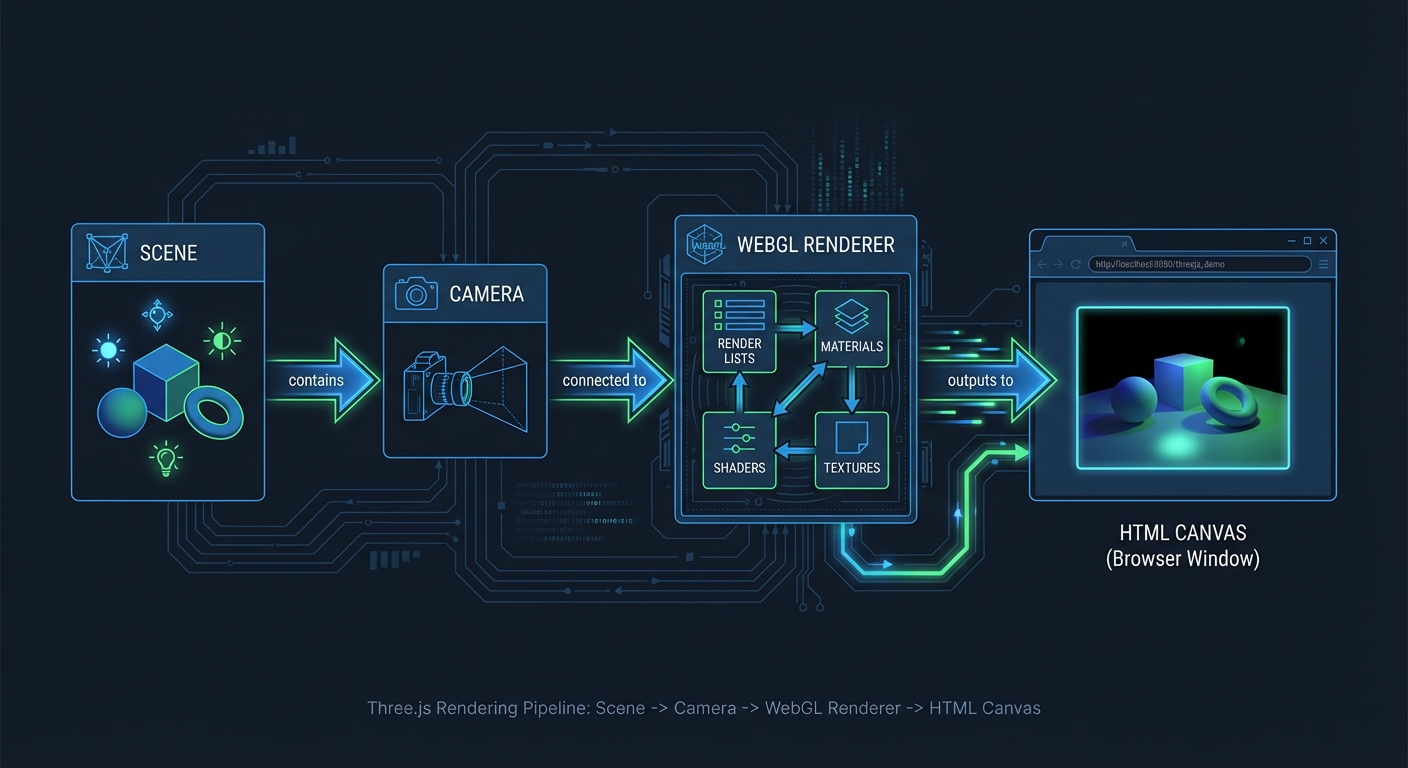

Three.js is a JavaScript library that abstracts the WebGL (and now WebGPU) graphics API into a developer-friendly scene graph. Instead of writing hundreds of lines of raw WebGL boilerplate to place a single triangle on screen, Three.js lets you describe a 3D world in terms of familiar concepts: scenes, cameras, lights, meshes, and materials. Under the hood it translates these high-level descriptions into the low-level GPU commands that WebGL and WebGPU require.

What problem does it solve? WebGL is powerful but brutally verbose. To render a textured cube with raw WebGL you must manually create shader programs, compile GLSL code, allocate GPU buffers, manage transformation matrices, and handle the render loop – easily 200+ lines of boilerplate before anything appears on screen. Three.js collapses that to roughly 15 lines of clear, readable code. It makes 3D accessible to any web developer who knows JavaScript.

Three.js was created by Ricardo Cabello (mrdoob) in 2010 and has grown to over 100,000 GitHub stars with more than 2,000 contributors. It powers 3D experiences at Apple, Nike, Google, NASA, IKEA, Spotify, and thousands of creative studios worldwide. With approximately 3-5 million weekly npm downloads as of early 2026, it is by far the most widely used 3D library for the web – roughly 270 times more downloads than its nearest competitor, Babylon.js. WebGL 2.0 is supported by 97%+ of browsers, and as of late 2025, WebGPU support has landed in all major browsers (Chrome, Firefox, Safari), giving Three.js access to next-generation GPU capabilities.

What will you build across 13 projects?

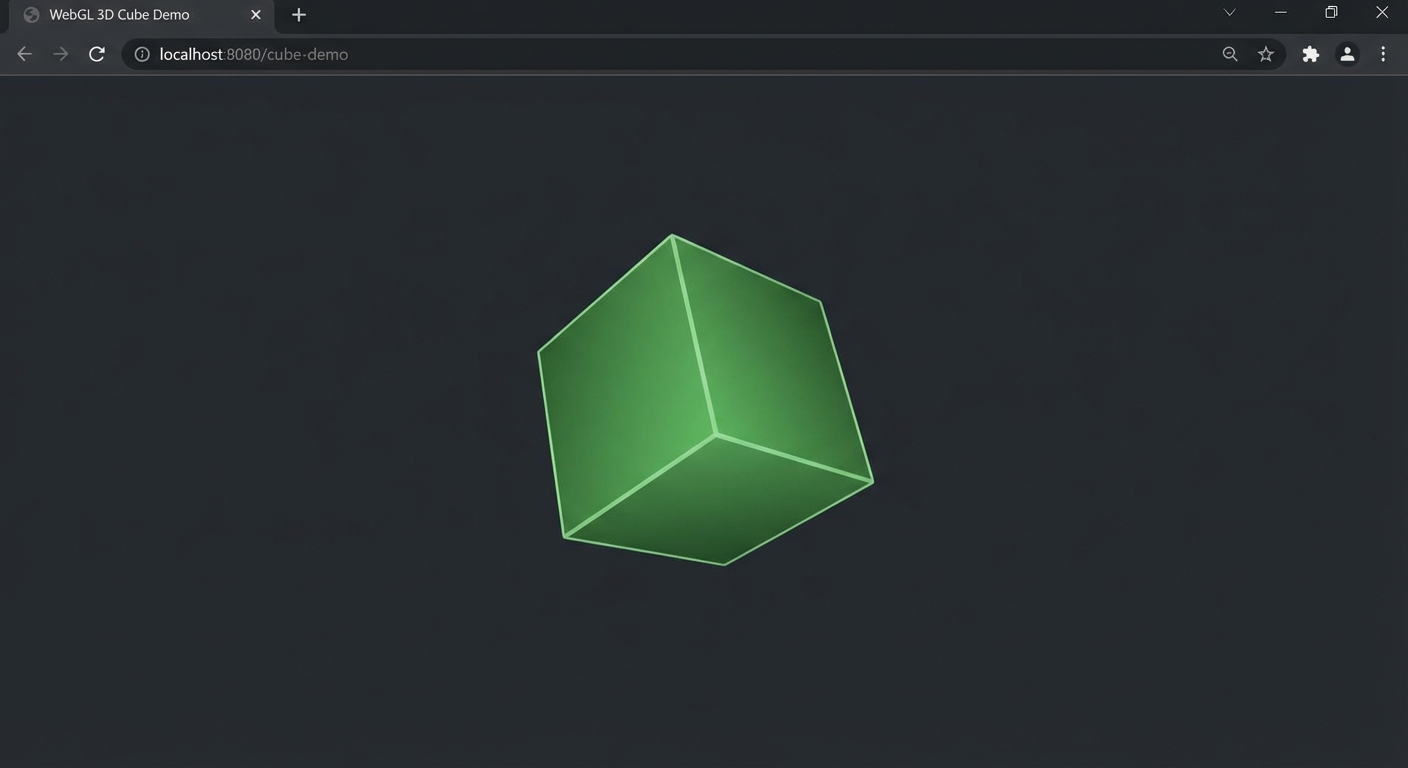

- A spinning, textured cube with orbit controls (rendering fundamentals)

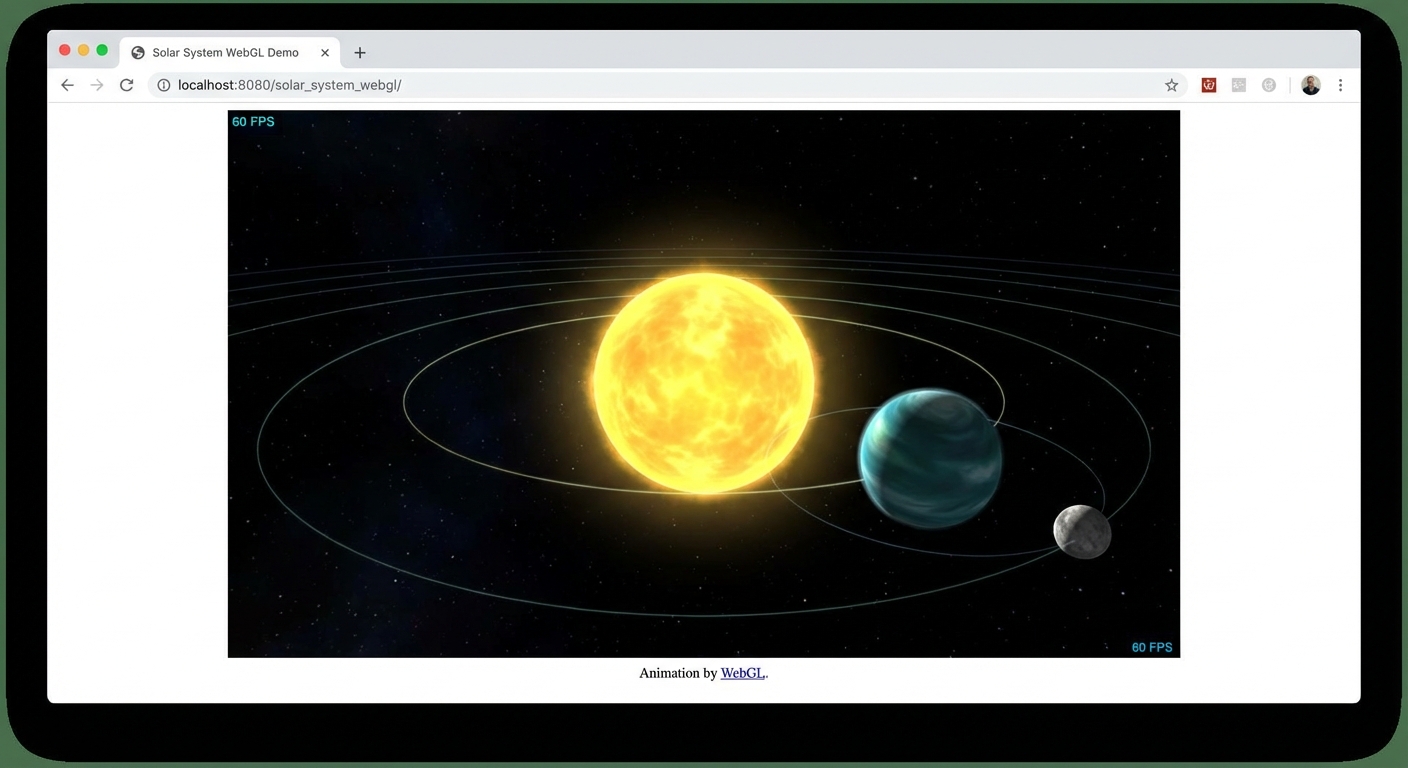

- A mini solar system with hierarchical orbits (scene graph and transformations)

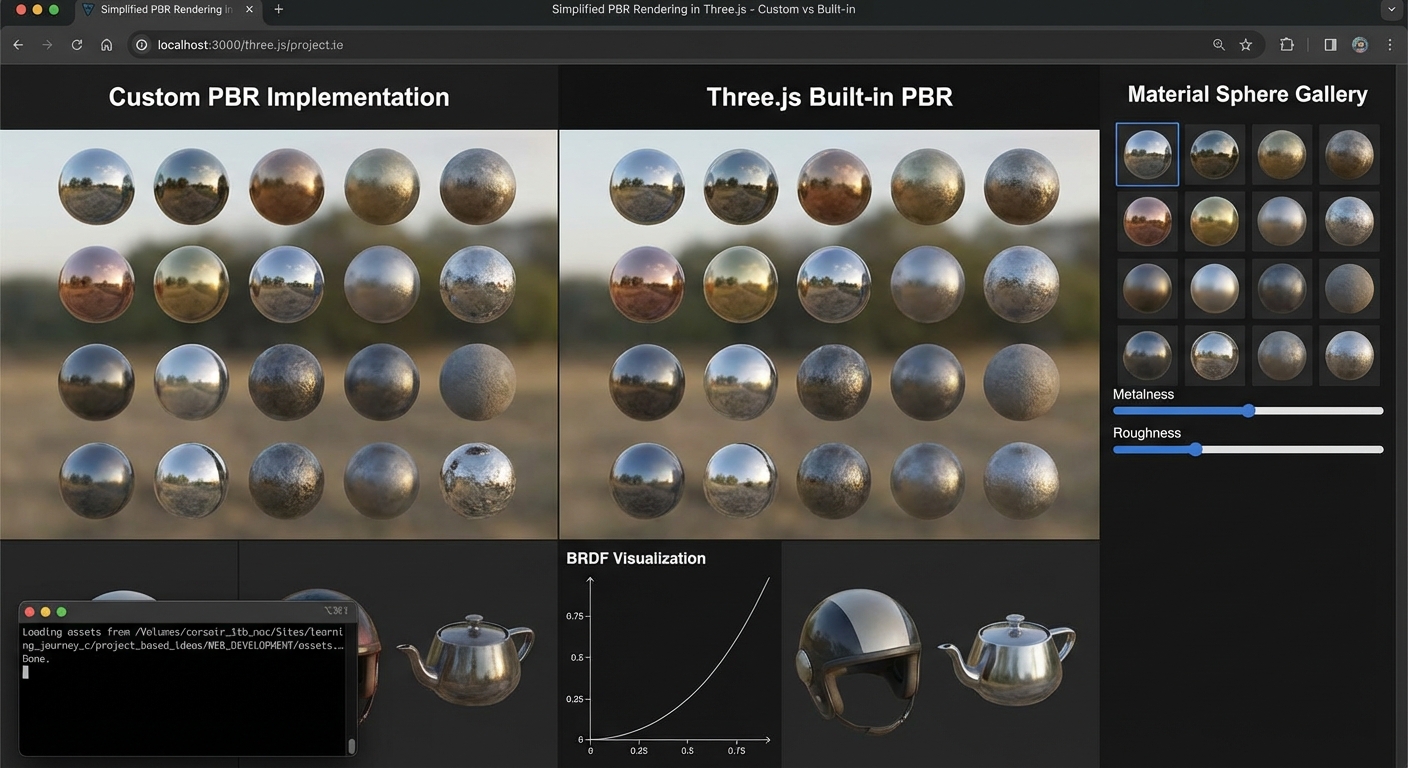

- A PBR material showcase gallery (materials and lighting)

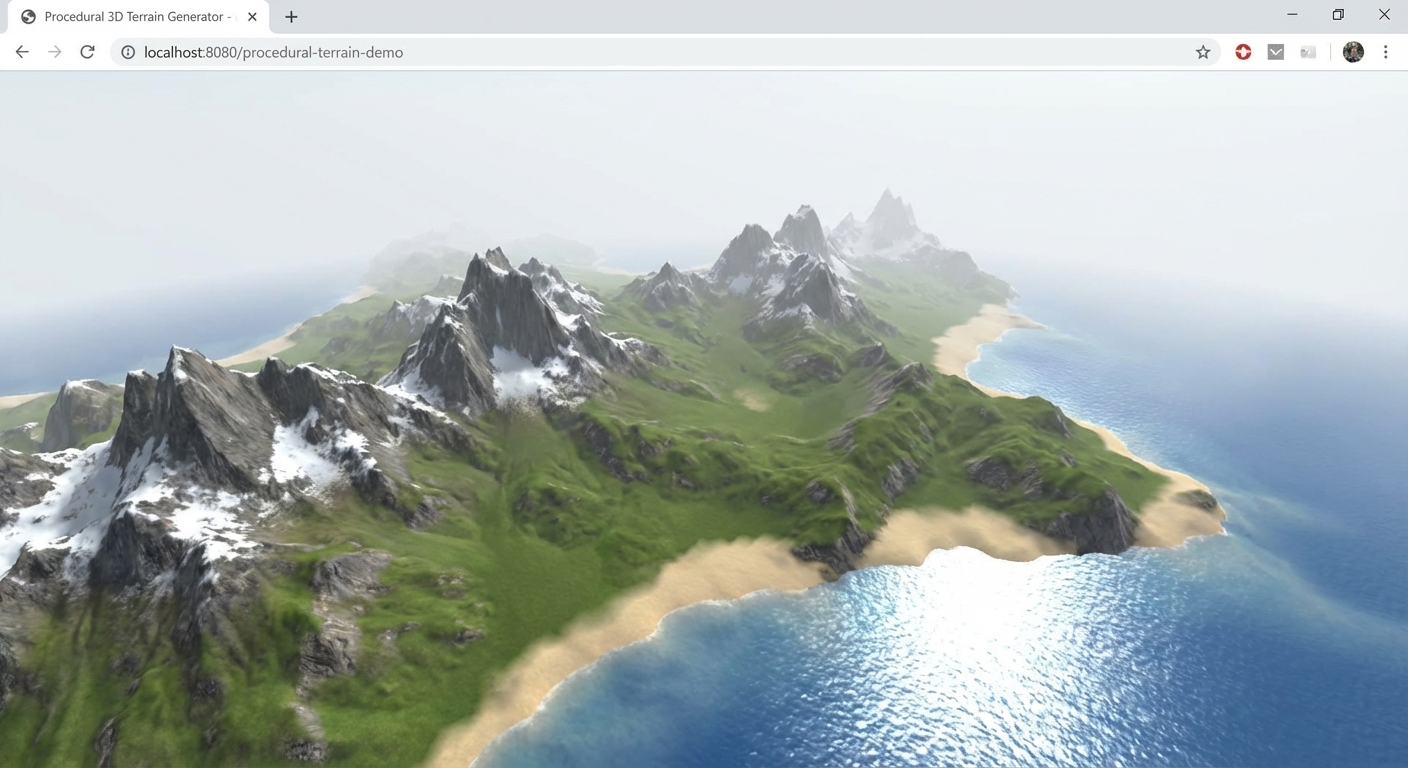

- A terrain heightmap renderer (geometry, textures, and displacement)

- A haunted house with baked shadows (lighting, shadows, and atmosphere)

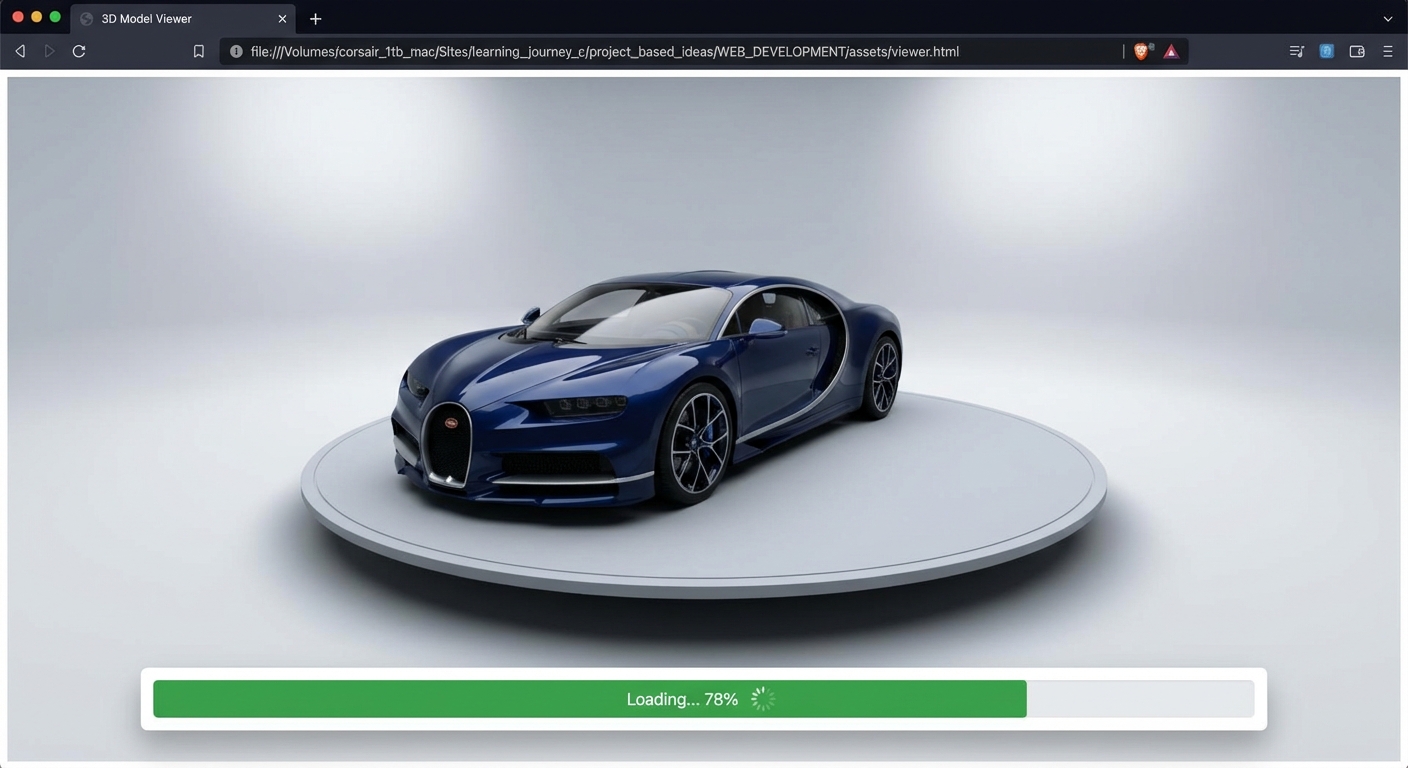

- A GLTF model viewer with animation playback (asset loading pipeline)

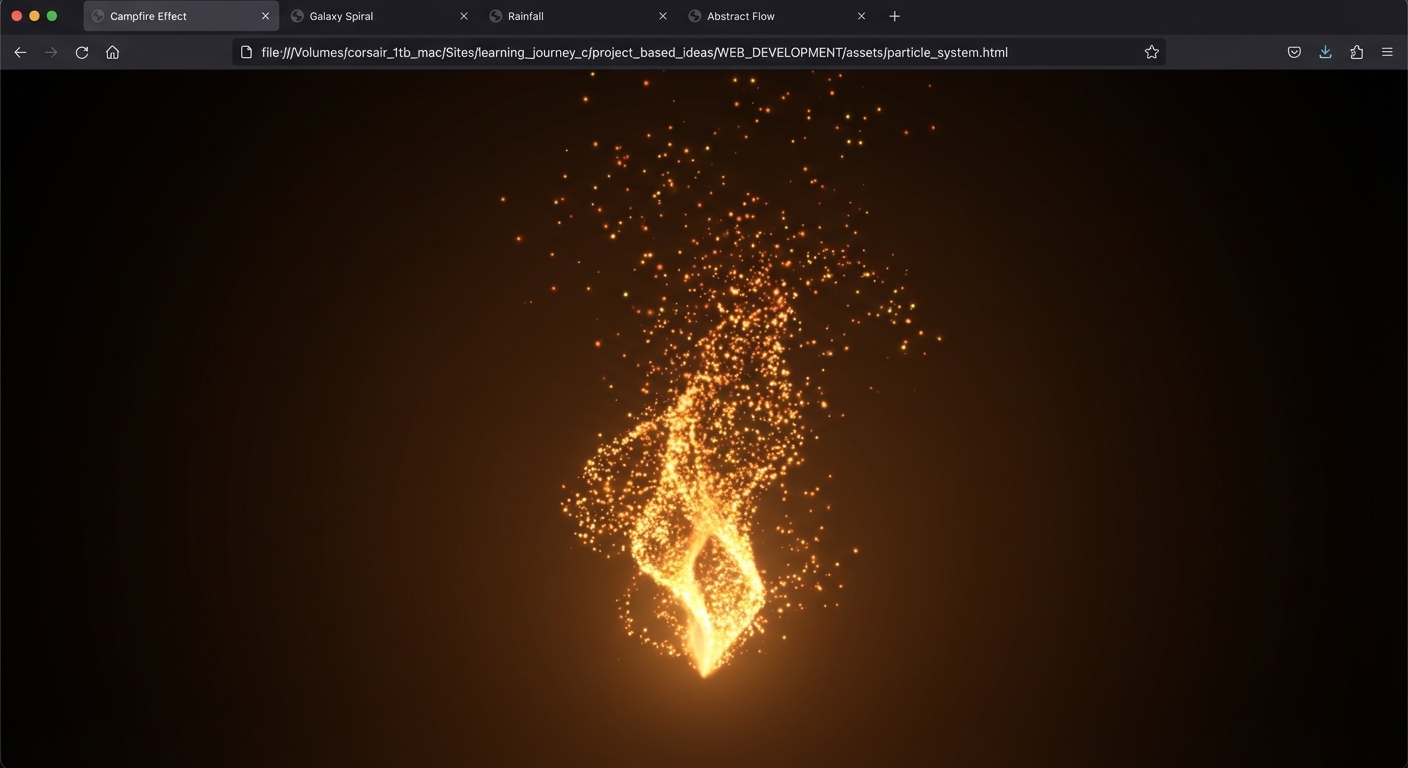

- A particle galaxy generator (particle systems and GPU animation)

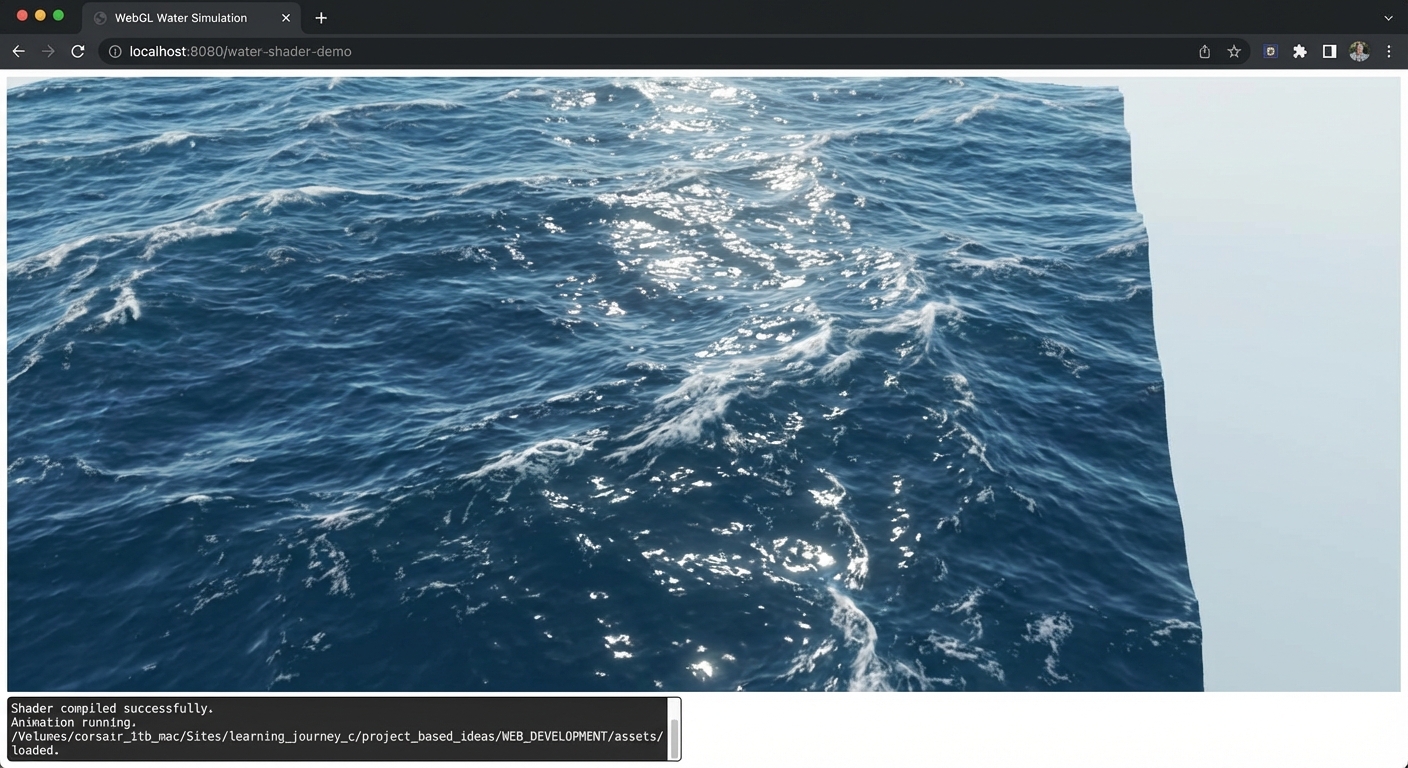

- An ocean water shader (custom GLSL vertex and fragment shaders)

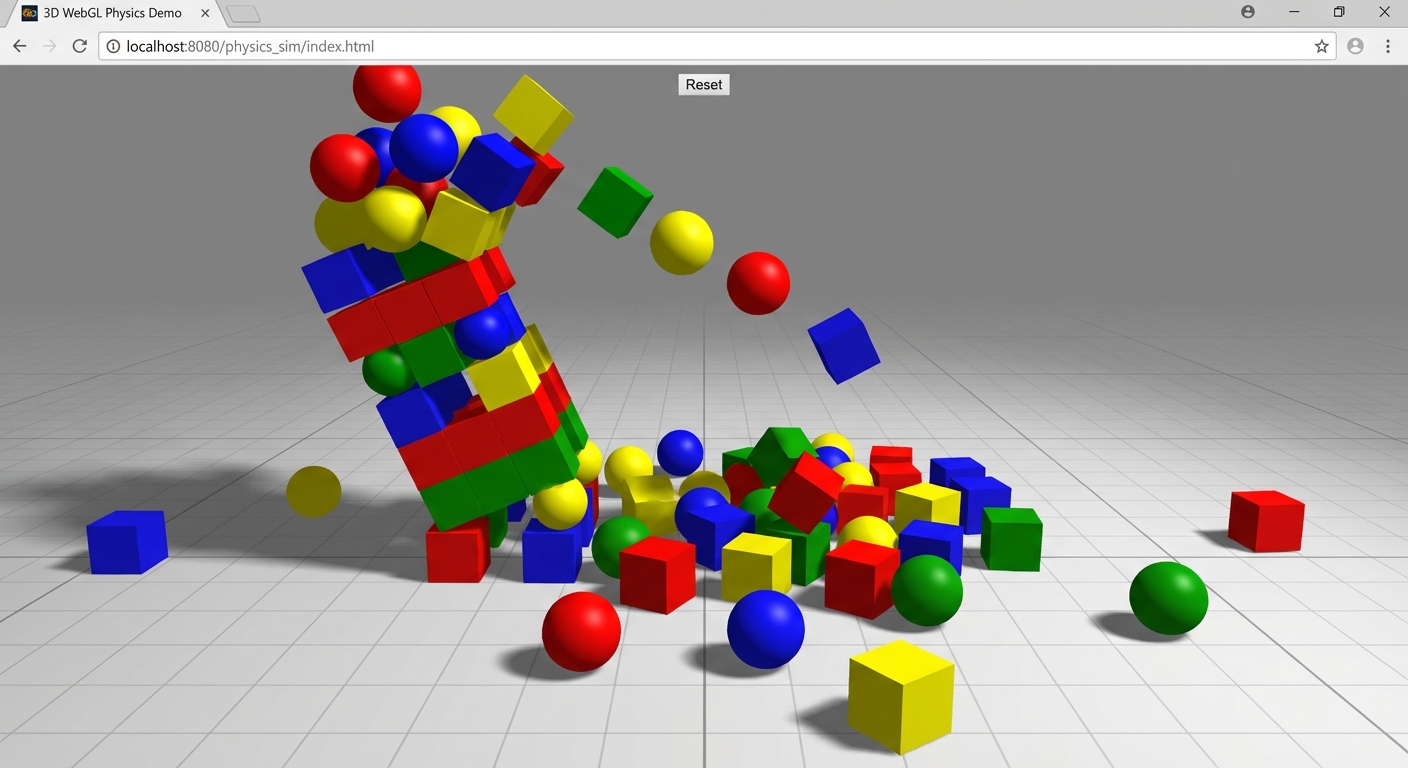

- A physics sandbox with ragdolls (physics engine integration)

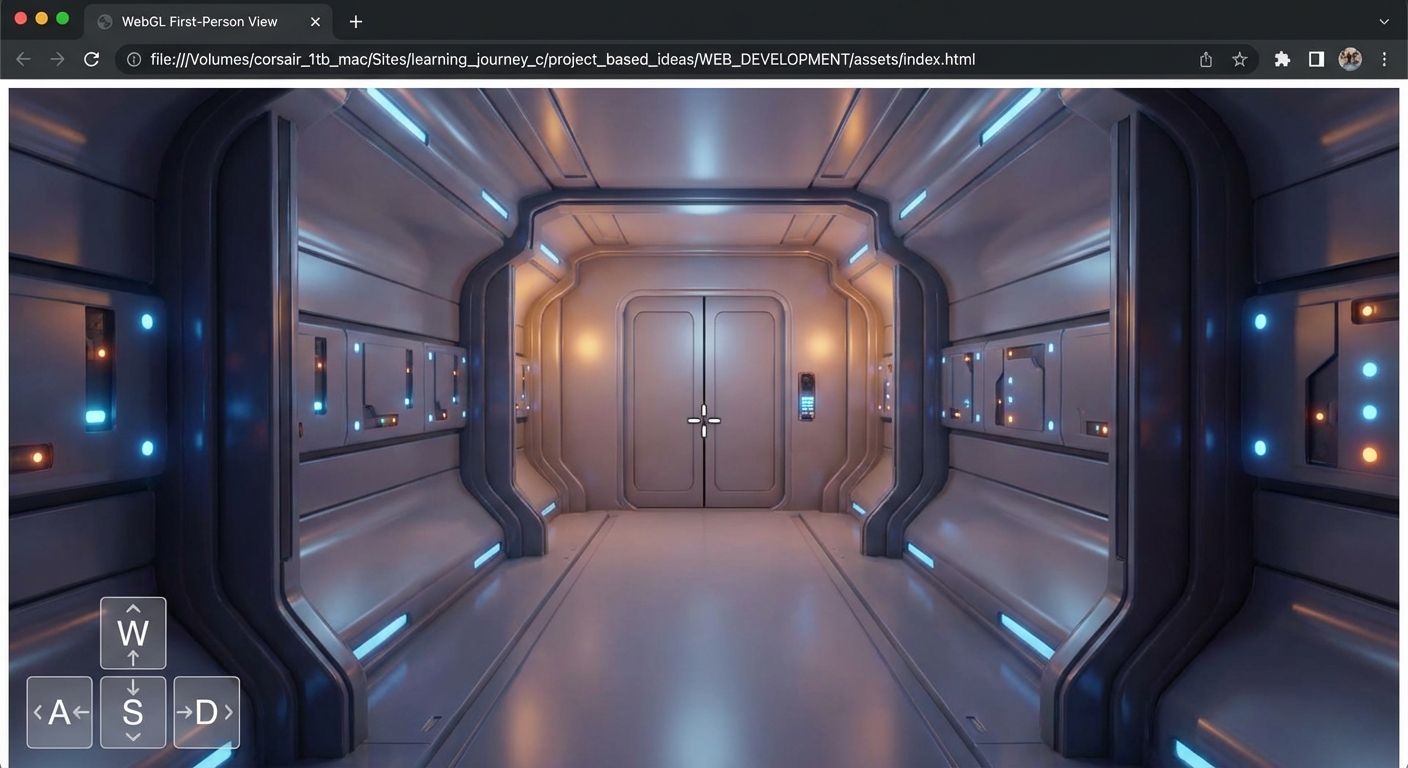

- A 3D product configurator (raycasting, interaction, and UI)

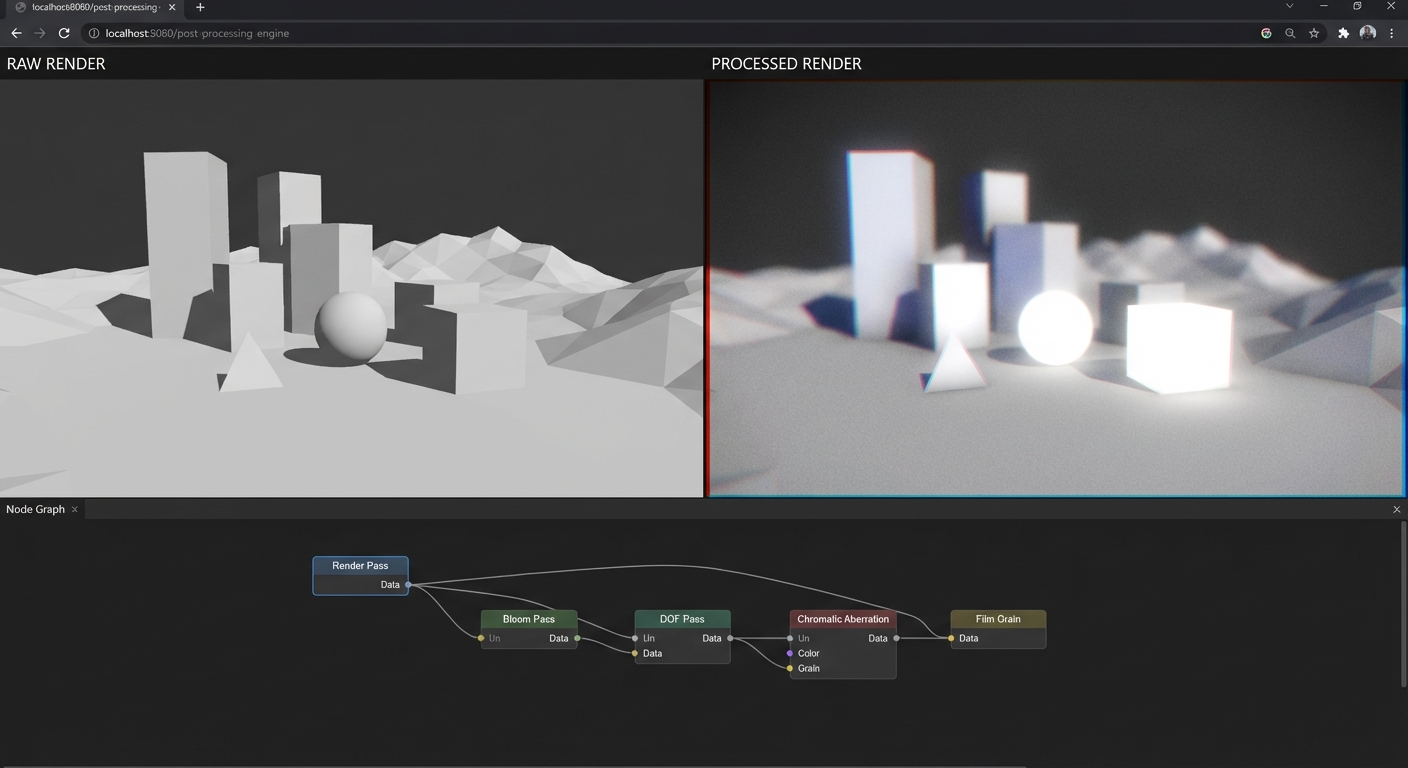

- A post-processed cinematic scene (bloom, DOF, color grading)

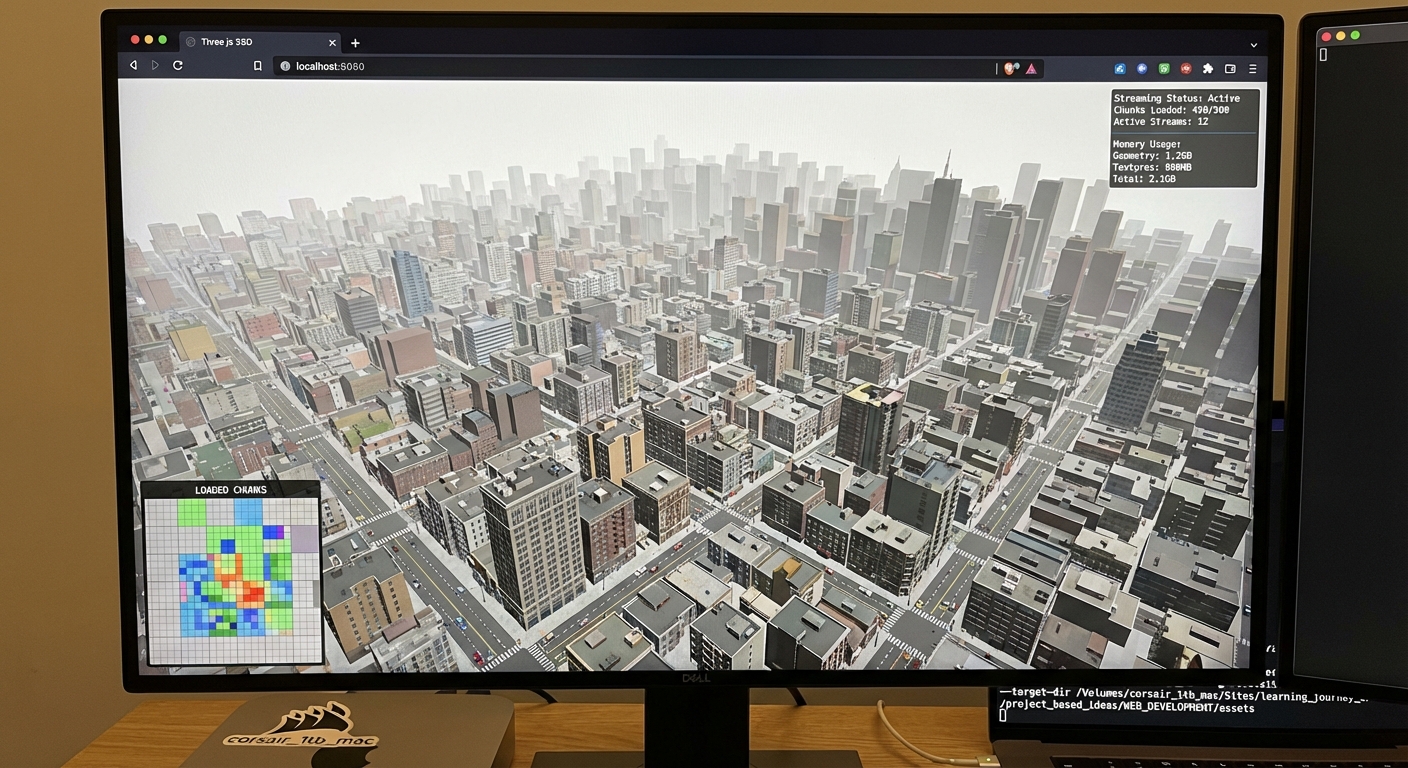

- A procedural city with 50K buildings (instancing and performance)

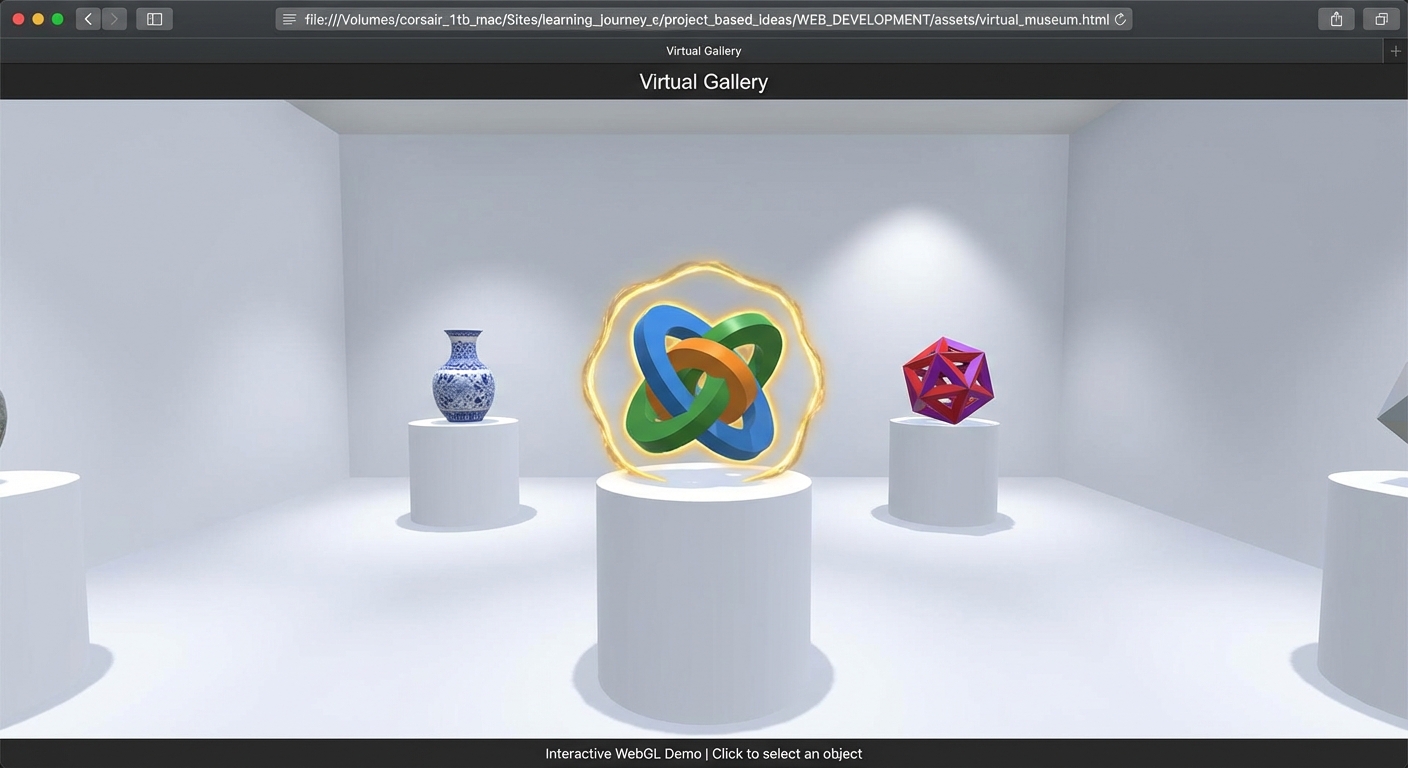

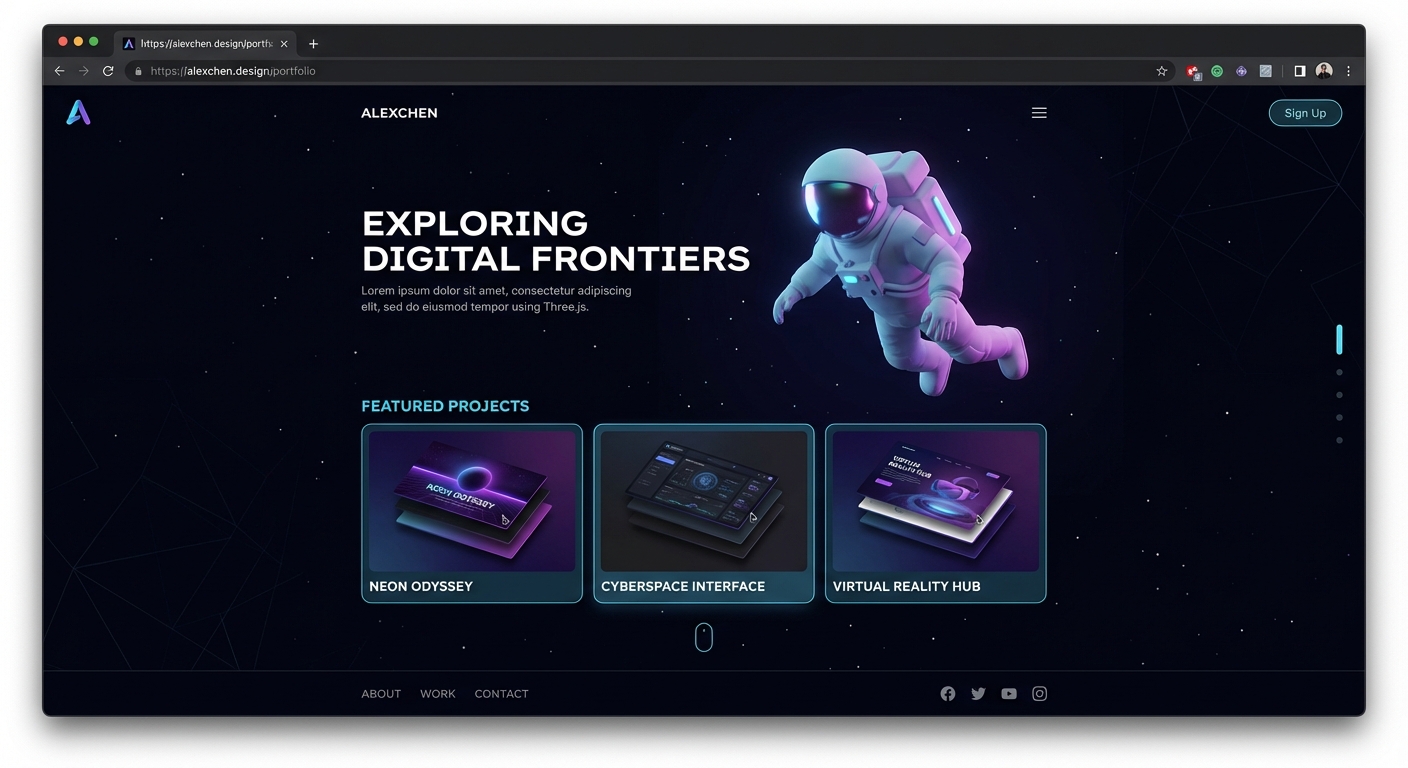

- An interactive 3D portfolio website (everything combined)

Scope

In scope:

- Three.js core API (Scene, Camera, Renderer, Mesh, Material, Light, etc.)

- The WebGL rendering pipeline and what happens on the GPU

- GLSL basics: vertex shaders, fragment shaders, uniforms, varyings

- Physics integration (cannon-es and Rapier)

- Post-processing with EffectComposer

- Performance optimization (instancing, LOD, draw call reduction)

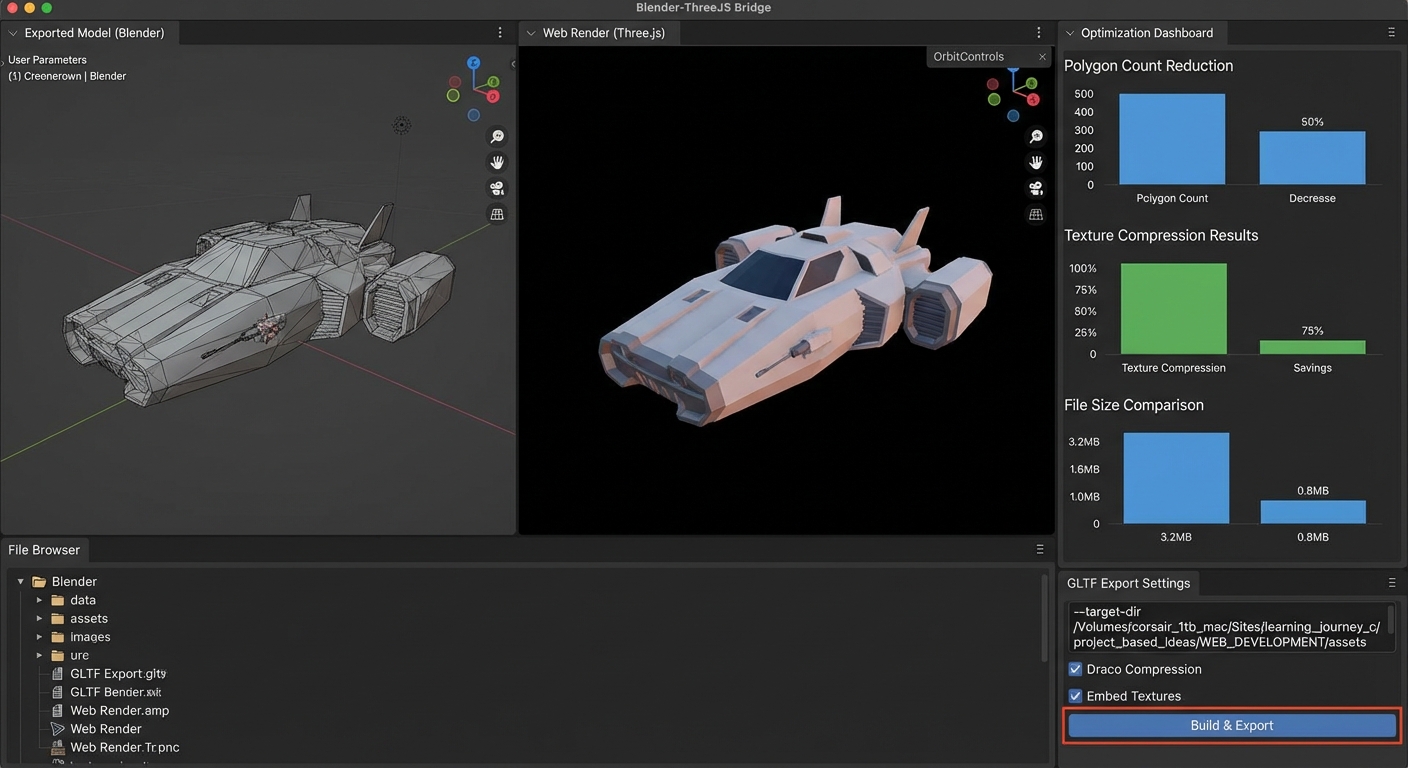

- Asset loading (GLTF/GLB, Draco, textures)

- Responsive design and device pixel ratio

Out of scope:

- React Three Fiber (R3F) – a wrapper around Three.js for React apps, not Three.js itself

- 3D modeling in Blender (we use pre-made models; modeling is a separate discipline)

- Production deployment, CI/CD, and hosting infrastructure

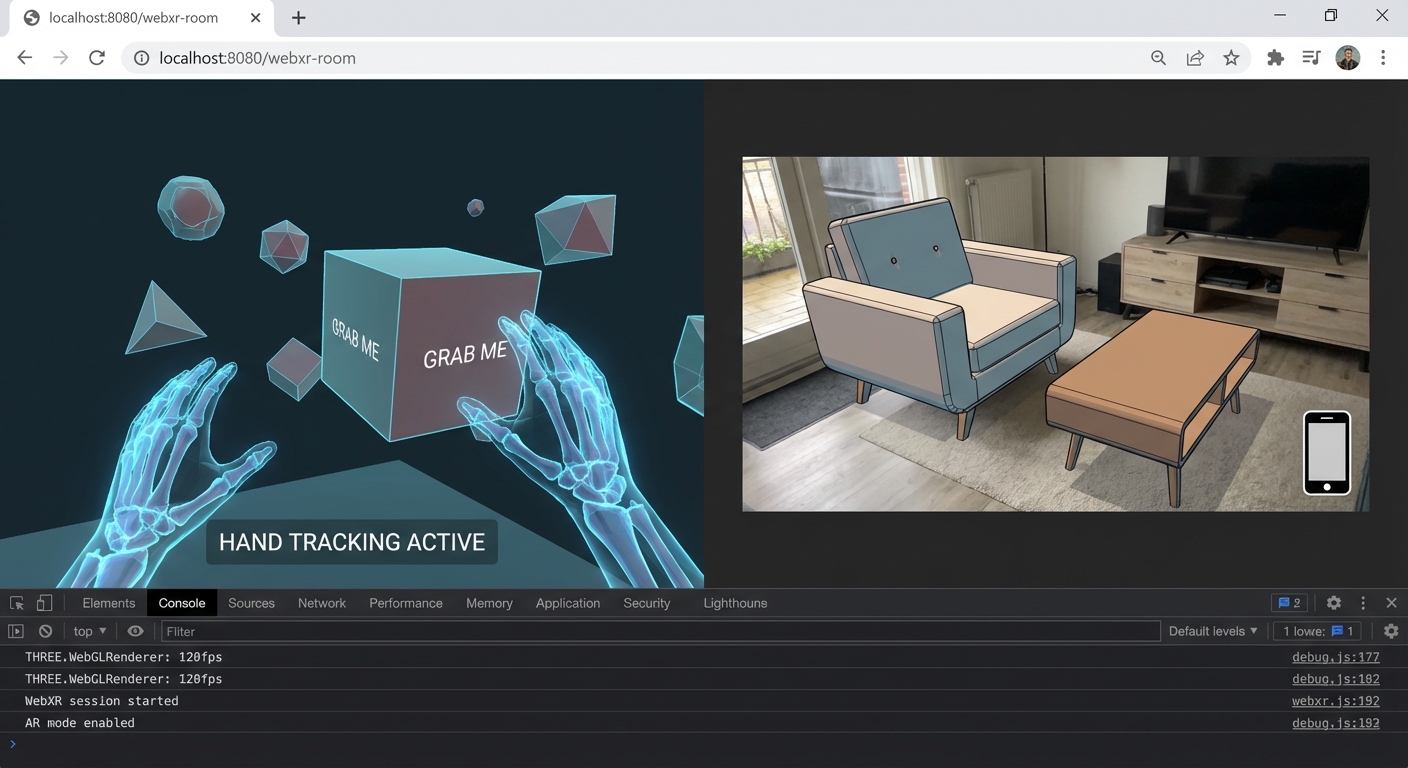

- WebXR/VR (a separate specialization)

- Three.js node material system / TSL (Three Shading Language) – bleeding edge, still stabilizing

Why Three.js over alternatives? Babylon.js is a full-featured game engine with built-in physics, audio, and a visual editor. PlayCanvas offers a cloud-based collaborative editor. A-Frame provides HTML-like markup for VR scenes. These are all valid tools. We focus on Three.js because it is the most widely adopted, has the largest ecosystem of tutorials and examples, gives you the most control over the rendering pipeline, and teaches you transferable 3D graphics concepts that apply to any engine or framework. If you understand Three.js deeply, you can pick up any alternative quickly.

The Big Picture: From JavaScript to Pixels

Every Three.js application follows the same pipeline, from your JavaScript code to colored pixels on the HTML canvas:

YOUR JAVASCRIPT CODE
         |
         v
+------------------+     +------------------+     +------------------+
|                  |     |                  |     |                  |
|   Scene Graph    |---->|      Camera      |---->|     Renderer     |
|                  |     |                  |     |  (WebGLRenderer) |
|  - Meshes        |     |  - Projection    |     |                  |
|  - Lights        |     |  - View matrix   |     | Traverses scene  |
|  - Groups        |     |  - Frustum       |     | Sorts objects    |
|  - Cameras       |     |                  |     | Issues draw      |
|                  |     |                  |     | calls to GPU     |
+------------------+     +------------------+     +--------+---------+
                                                           |
  GPU PIPELINE                                             |
         +-------------------------------------------------+
         |
         v
+------------------+     +------------------+     +------------------+
|                  |     |                  |     |                  |
|  Vertex Shader   |---->|    Rasterizer    |---->| Fragment Shader  |
|                  |     |                  |     |                  |
| Runs per-vertex  |     | Converts         |     | Runs per-pixel   |
| Transforms       |     | triangles to     |     | Computes final   |
| positions from   |     | fragments        |     | color using      |
| model space to   |     | (potential       |     | lighting, tex-   |
| clip space       |     | pixels)          |     | tures, uniforms  |
|                  |     |                  |     |                  |
+------------------+     +------------------+     +--------+---------+
                                                           |
                                                           v
                                                  +------------------+
                                                  |                  |
                                                  |   Framebuffer    |
                                                  |                  |
                                                  | Depth testing    |
                                                  | Blending         |
                                                  | Final pixels     |
                                                  |                  |
                                                  +--------+---------+
                                                           |
                                                           v
                                                  +------------------+
                                                  |                  |
                                                  |  HTML <canvas>   |
                                                  |                  |
                                                  | The image you    |
                                                  | see on screen    |
                                                  |                  |
                                                  +------------------+

This pipeline runs 60 times per second (or more on high-refresh displays). Every frame, Three.js traverses your scene graph, determines what is visible to the camera, and sends drawing instructions to the GPU. The GPU then runs your vertex shader on every vertex, rasterizes the resulting triangles into pixel-sized fragments, runs the fragment shader on each fragment to compute its color, and writes the final image to the canvas.

Understanding this pipeline is the single most important mental model in this entire guide. Every project you build exercises different stages of it. When something goes wrong – a mesh is invisible, a shadow is missing, a material looks flat – you will debug by asking: “which stage of the pipeline is broken?”

Here is a simplified view of the same pipeline as a linear flow, useful for quick mental reference:

JavaScript --> Scene + Camera --> Renderer traversal --> Vertex Shader
     |                                                        |
     |                                                   (per vertex)
     |                                                        |
     v                                                        v
Animation Loop                                          Rasterization
(requestAnimationFrame)                                       |
     |                                                  (triangles ->
     +--> Update transforms                               fragments)
     +--> Step physics                                        |
     +--> mixer.update(delta)                                 v
     +--> renderer.render()                             Fragment Shader
          or composer.render()                            (per pixel)
                                                              |
                                                              v
                                                      Depth Test + Blend
                                                              |
                                                              v
                                                       Post-Processing
                                                         (optional)
                                                              |
                                                              v
                                                          <canvas>

How to Use This Guide

Read the Theory Primer first. It is structured as a mini-book with chapters that build on each other, from the rendering pipeline to shaders and post-processing. You do not need to memorize every detail, but you need the mental models before you start building. Skim once, then revisit chapters as each project demands deeper understanding.

Work the projects in order on your first pass. Projects 1-3 establish fundamentals. Projects 4-7 introduce intermediate techniques. Projects 8-12 tackle advanced topics. Project 13 synthesizes everything. Each project explicitly lists which primer chapters it depends on, so you can always jump back for a refresher.

Before coding each project, read two sections carefully:

- The Core Question You Are Answering – This frames what you should be learning, not just what you should be building. If you cannot answer the core question after finishing the project, you missed the point.

- The Thinking Exercise – This asks you to diagram, trace, or reason about the system before writing any code. Thinking first, coding second produces deeper understanding.

Use debugging tools from day one.

- lil-gui – Add sliders and color pickers for every tunable parameter (material roughness, light intensity, camera FOV). Tweak values live instead of recompiling.

- Stats.js – Display the FPS counter as an overlay. If you drop below 30 FPS, something is wrong.

- renderer.info – Log draw calls and triangle counts to the console after each frame. Watch these numbers and understand why they change.

- Spector.js (Chrome extension) – Capture a single frame and inspect every GPU operation. Invaluable when debugging shader issues or shadow artifacts.

Validate each project against its Definition of Done. Every project ends with a concrete checklist. Do not move on until every item is checked. “It mostly works” is not done. The Definition of Done is designed to verify that you actually learned the concept, not just produced output.

When you get stuck, use the Hints in Layers. Each project provides 4 levels of hints, from high-level direction to pseudocode. Try to solve with Hint 1 only. Drop to Hint 2 if stuck for more than 30 minutes. Use Hints 3-4 only as a last resort.

Keep a learning journal. After each project, write down: (1) what the core concept was, (2) what surprised you, (3) what you would do differently. This reflection solidifies understanding and creates a personal reference you can revisit. The interview questions at the end of each project test whether you can articulate what you learned – practice answering them out loud.

Pair projects with book chapters. Each project lists specific book chapters and references. Reading the relevant chapter before or during the project provides theoretical depth that pure coding alone misses. The books recommended in this guide are not optional padding – they explain the “why” behind the “how.”

Prerequisites & Background Knowledge

Essential Prerequisites (Must Have)

- JavaScript (ES6+): You must be comfortable with ES6 modules (import/export), classes, arrow functions, destructuring, template literals, async/await, and Promises. Three.js is a JavaScript library – if you fight the language, you will not learn the 3D concepts.

- HTML & CSS basics: You need to create an HTML page, link a script, and style a canvas element. Nothing fancy.

- Basic math: Coordinates (x, y, z), angles (degrees vs radians), basic trigonometry (sin, cos for circular motion), and an intuitive sense of what a vector is (direction + magnitude). You do not need linear algebra expertise, but you need comfort with the idea that (1, 0, 0) means “one unit in the x direction.”

Recommended Reading: “Math for Programmers” by Paul Orland - Chapters 2-5 (vectors, 3D coordinates, transformations, matrices)
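As a quick self-check on the math prerequisite, the sketch below works through circular motion and vector addition in plain JavaScript, with no Three.js involved. The helper names (degToRad, orbitPosition, addVectors) are invented for this illustration and are not part of any library.

```javascript
// Math.sin and Math.cos expect radians, so convert degrees first.
function degToRad(degrees) {
  return (degrees * Math.PI) / 180;
}

// Point on a circle of the given radius in the XZ plane (Y is up).
function orbitPosition(radius, angleRadians) {
  return {
    x: radius * Math.cos(angleRadians),
    y: 0,
    z: radius * Math.sin(angleRadians),
  };
}

// Component-wise vector addition: moving a position by an offset.
function addVectors(a, b) {
  return { x: a.x + b.x, y: a.y + b.y, z: a.z + b.z };
}

const quarterTurn = orbitPosition(2, degToRad(90));
const moved = addVectors({ x: 3, y: 0, z: 0 }, { x: 0, y: 2, z: 0 });

console.log(quarterTurn); // x is approximately 0 (floating-point noise), z is 2
console.log(moved);       // { x: 3, y: 2, z: 0 }
```

If the near-zero x value surprises you, that is the usual floating-point behavior of Math.cos at a quarter turn, and it is harmless for animation.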

Helpful But Not Required

- TypeScript – Three.js has excellent TypeScript definitions. Using TS catches many errors (e.g., passing a string where a Color is expected). Projects can be done in either JS or TS.

- Linear algebra – Understanding 4x4 transformation matrices, dot products, and cross products will deepen your understanding of the vertex shader stage. You will pick this up naturally through Projects 2, 4, and 8.

- Any experience with a game engine (Unity, Unreal, Godot) – The concepts (scene graph, materials, lights, cameras) transfer directly.

Self-Assessment Questions

Before starting, make sure you can answer these:

- What does const mesh = new MyClass({ color: 0xff0000 }) do in JavaScript? (Object instantiation with an options object)

- What is the difference between let, const, and var? (Block scoping, immutability of binding)

- Can you write a function that takes a callback and calls it after a delay? (setTimeout, Promises)

- What does Math.sin(angle) return when angle = Math.PI / 2? (1.0 – and the angle is in radians, not degrees)

- If an object is at position (3, 0, 0) and you add the vector (0, 2, 0), where is it now? (3, 2, 0)

If any of these are unclear, spend a day reviewing JavaScript fundamentals before starting.

Development Environment Setup

Required Tools:

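- Node.js – v20+ (current LTS recommended). Used to run Vite and manage packages. The create vite@latest command below installs the newest Vite release, and recent Vite versions no longer support Node 18.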

- Vite – Modern build tool that serves Three.js modules with hot reload. Far faster than Webpack for development.

- A code editor – VS Code recommended. Install the “Shader languages support” extension for GLSL syntax highlighting.

- A modern browser – Chrome or Firefox with WebGL 2.0 support (97%+ of browsers).

Recommended Tools:

- lil-gui – Debug parameter panel (Projects 1-13)

- Stats.js – FPS overlay (Projects 1-13)

- Spector.js – WebGL frame debugger (Projects 8+)

- Blender – For viewing/exporting GLTF models (Project 6). Not required for modeling from scratch.

Testing Your Setup:

$ node --version

v20.x.x

$ npm create vite@latest test-threejs -- --template vanilla

$ cd test-threejs

$ npm install three lil-gui

$ npm run dev

VITE v5.x.x ready in 200ms

-> Local: http://localhost:5173/

Open http://localhost:5173/ in your browser. If you see the Vite starter page, your environment is ready.

Time Investment

| Category | Time per Project | Projects |
|---|---|---|
| Simple (fundamentals) | 4-8 hours | 1, 2, 3 |
| Moderate (intermediate) | 10-20 hours | 4, 5, 6, 7, 10 |
| Complex (advanced) | 20-40 hours | 8, 9, 11, 12, 13 |
| Total sprint | ~200-350 hours (about 3-5 months) | 1-13 |

Important Reality Check

3D graphics is a deep field. You will encounter moments where a mesh is invisible and you have no idea why. Shadows will look wrong. Shaders will compile but produce black screens. This is normal. The rendering pipeline has many stages, and a bug at any stage can produce confusing results. The Theory Primer and the Debugging sections in each project exist specifically to help you develop a systematic debugging approach. Do not skip them. The difference between a frustrated beginner and a productive 3D developer is the ability to ask: “Which stage of the pipeline is this bug in?”

Common early frustrations and their root causes:

| Symptom | Likely Cause |
|---|---|
| Black screen, no errors | Camera inside the object, or object at wrong position, or no lights |
| Object visible but all black | No lights in the scene (and material is not MeshBasicMaterial) |
| Texture not appearing | Texture path wrong, or texture not loaded before render |
| Shadow acne (stripy shadows) | Shadow bias too small: adjust light.shadow.bias |
| Animation runs at different speeds on different machines | Not using delta time for movement |
| Shader compiles but shows only black | Fragment shader outputs vec4(0,0,0,1): check your math and uniforms |
| Performance drops suddenly | Too many draw calls, not using InstancedMesh for repeated objects |

Every one of these issues is addressed in detail within the relevant Theory Primer chapter and project. Keep this table as a quick reference when debugging.

Big Picture / Mental Model

Before diving into individual concepts, you need to see how everything connects. The following diagram shows every major Three.js subsystem and how data flows between them:

+===========================================================================+

| YOUR APPLICATION CODE |

| |

| - Create scene, camera, renderer |

| - Build meshes (geometry + material) |

| - Add lights, load models, set up controls |

| - Run the animation loop |

+====================================+======================================+

|

v

+===========================================================================+
|                            SCENE GRAPH (Tree)                             |
|                                                                           |
|                               Scene (root)                                |
|                             /      |      \                               |
|                        Group      Mesh    DirectionalLight                |
|                        /   \       |                                      |
|                    Mesh    Mesh    |                                      |
|                     |       |      |                                      |
|             (child transforms      |                                      |
|              inherit parent)       v                                      |
|                     Each Mesh = Geometry + Material                       |
|                  (Geometry + Material = visible object)                   |
|                                                                           |
+====================================+======================================+

|

      +-------------+-------------+--+----------+------------+
      |             |             |             |            |
      v             v             v             v            v
+-----------+  +----------+  +----------+  +---------+  +----------+
| Geometry  |  | Material |  | Textures |  | Lights  |  | Camera   |
|           |  |          |  |          |  |         |  |          |
| Vertices  |  | Color    |  | Albedo   |  | Amb.    |  | FOV      |
| Normals   |  | Rough.   |  | Normal   |  | Dir.    |  | Aspect   |
| UVs       |  | Metal.   |  | Rough.   |  | Point   |  | Near/Far |
| Indices   |  | Emiss.   |  | AO       |  | Spot    |  | Frustum  |
| (shape)   |  | (look)   |  | Env.Map  |  | Rect.   |  | (lens)   |
+-----------+  +----+-----+  +----+-----+  +----+----+  +----+-----+
                    |             |             |            |
                    v             v             v            v
               +--------------------------------------------------+
               |            Material + Texture Binding            |
               |      Textures feed into material properties      |
               |   (map -> color, normalMap -> surface detail,    |
               |    roughnessMap -> per-pixel roughness, etc.)    |
               +---------------------------+----------------------+

|

+=======================================v===========================+

| RENDERER (WebGLRenderer) |

| |

| 1. Traverse scene graph |

| 2. Frustum cull (skip objects outside camera view) |

| 3. Sort: opaque front-to-back, transparent back-to-front |

| 4. For each visible object: |

| - Bind shader program (vertex + fragment) |

| - Upload uniforms (matrices, colors, light data) |

| - Bind textures to GPU texture units |

| - Bind vertex buffers (positions, normals, UVs) |

| - Issue draw call to GPU |

| |

+===================================+==============================+

|

v

+===========================================================================+
|                               GPU PIPELINE                                |
|                                                                           |
|   Vertex Shader --> Primitive Assembly --> Rasterization                  |
|        |                                         |                        |
|   (per vertex:                             (triangles ->                  |
|    model space                              fragments)                    |
|    -> clip space)                                |                        |
|                                                  v                        |
|                                           Fragment Shader                 |
|                                                  |                        |
|                                             (per pixel:                   |
|                                              lighting,                    |
|                                              textures,                    |
|                                              final color)                 |
|                                                  |                        |
|                                                  v                        |
|                                         Depth Test + Blend                |
|                                                  |                        |
|                                                  v                        |
|                                             Framebuffer                   |
+====================================+======================================+

|

v

+===========================================================================+

| POST-PROCESSING (Optional) |

| |

| EffectComposer reads framebuffer as a texture, applies: |

| Bloom -> DOF -> Color Grading -> FXAA -> Final Output |

| |

| Each effect is a full-screen shader pass (ping-pong buffers) |

+===================================+======================================+

|

v

+------------------+

| HTML <canvas> |

| (final pixels) |

+------------------+

+=============================================================+

| PARALLEL WORLD: PHYSICS (Optional) |

| |

| Physics Engine (cannon-es / Rapier) |

| - Maintains its own world with rigid bodies |

| - Steps simulation at fixed time intervals (1/60s) |

| - Each frame: copy physics body positions/rotations |

| to the corresponding Three.js meshes |

| - Physics shapes are SIMPLER than visual meshes |

| (box collider for a detailed car model) |

| |

| Physics World ----sync each frame----> Three.js Meshes |

+=============================================================+

+=============================================================+

| INTERACTION: RAYCASTING |

| |

| Mouse click --> NDC coordinates --> Ray from camera |

| --> Test intersection with scene objects |

| --> Nearest hit = clicked object |

| |

| Used for: picking, hover effects, drag-and-drop, |

| shooting, UI interaction in 3D space |

+=============================================================+

+=============================================================+

| ANIMATION SYSTEM |

| |

| requestAnimationFrame (browser heartbeat, ~60fps) |

| | |

| v |

| Clock.getDelta() --> delta time (seconds since last frame) |

| | |

| +--> Update transforms: rotation += speed * delta |

| +--> Update AnimationMixer: mixer.update(delta) |

| +--> Step physics: world.step(1/60, delta) |

| +--> Render: renderer.render(scene, camera) |

| or composer.render() for post-processing |

+=============================================================+

How the pieces connect:

-

Scene Graph is the organizational backbone. Everything exists within the scene tree. Parent-child relationships define how transformations cascade – moving a parent Group moves all its children. This is how you build articulated structures like a robot arm or a solar system.

-

Geometry defines shape (vertices, triangles, normals, UVs). Material defines appearance (color, roughness, metalness, shading model). A Mesh combines the two into a renderable object. This separation means you can reuse geometries with different materials, or share one material across many meshes.

-

Textures feed into materials. A single

MeshStandardMaterialcan accept a color map, normal map, roughness map, metalness map, ambient occlusion map, emissive map, displacement map, and environment map – each adding a layer of visual detail without increasing geometric complexity. -

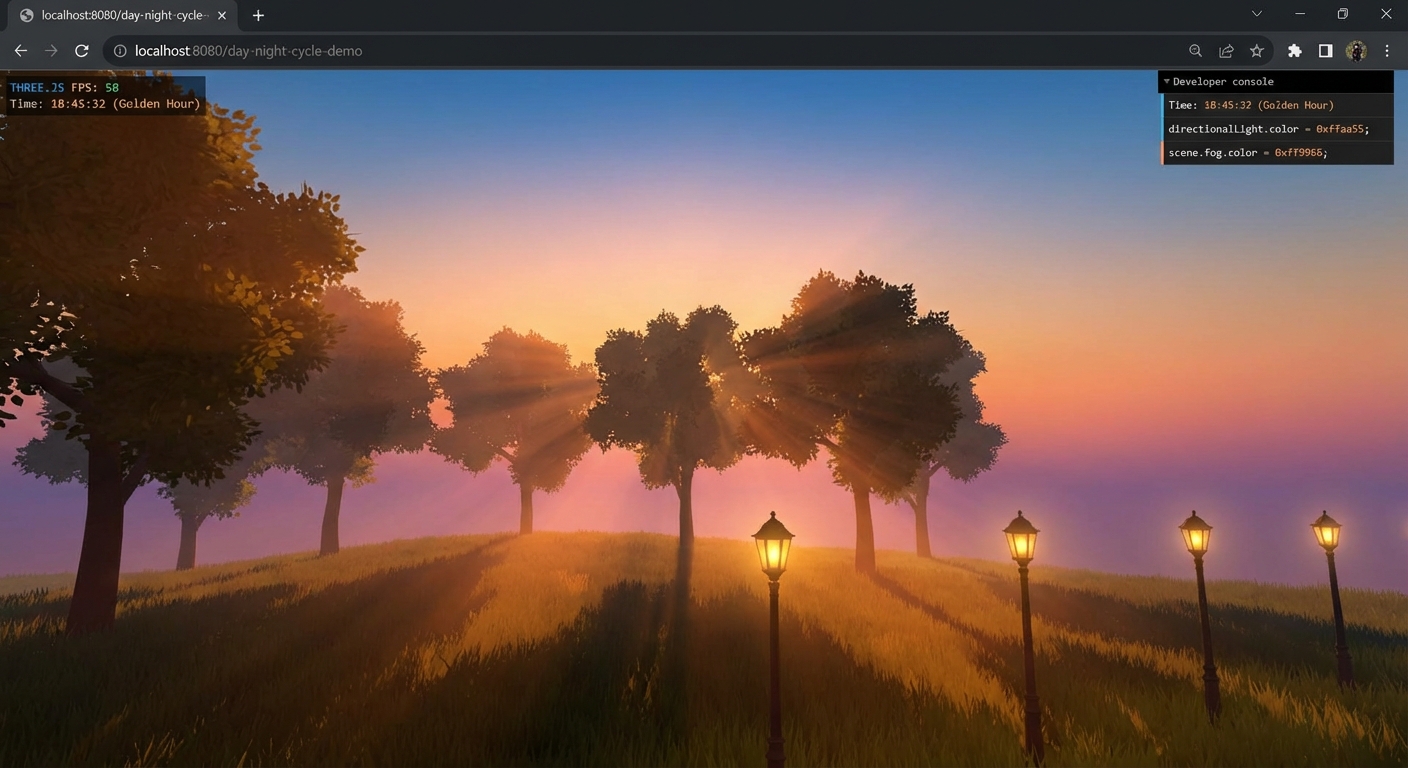

Lights define illumination. Different light types (ambient, directional, point, spot) simulate different real-world light sources. Shadow-casting lights render the scene from their own perspective to create shadow maps – essentially depth textures that the main render uses to determine which fragments are in shadow.

-

Camera defines the viewpoint and projection. Perspective cameras mimic human vision (distant objects shrink). Orthographic cameras maintain uniform scale (useful for 2D games, architectural plans). The camera’s frustum determines which objects are visible and which are culled.

-

Renderer orchestrates the entire process. It traverses the scene graph, determines visibility, sorts objects for correct rendering order, and issues draw calls to the GPU. Each draw call sends a batch of triangles plus their shader program, uniforms, and textures to the GPU.

-

The GPU pipeline runs the vertex shader (per vertex), assembles triangles, rasterizes them into fragments, runs the fragment shader (per fragment), performs depth testing and blending, and writes the final pixels to the framebuffer.

-

Post-processing intercepts the framebuffer before it reaches the canvas. Each effect pass reads the previous result as a texture, applies a full-screen shader, and writes to a new buffer. Effects are chained: bloom, then depth of field, then color grading, then anti-aliasing.

-

Physics runs in a completely separate world. The physics engine knows nothing about Three.js – it has its own rigid bodies, colliders, and forces. Each frame you step the physics simulation, then copy each body’s position and rotation to the corresponding Three.js mesh. This decoupling is deliberate: physics runs at a fixed time step (1/60s) regardless of rendering frame rate, ensuring stable simulation.

-

Raycasting bridges user input and the 3D world. A ray is cast from the camera through the mouse position into the scene. Intersection tests determine which object was clicked or hovered. This is how you build interactive 3D applications.

-

The Animation Loop ties everything together.

requestAnimationFramecalls your update function ~60 times per second. Each frame you: compute delta time, update object transforms, step physics, update animation mixers, and render. Delta time ensures consistent speed regardless of frame rate.
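The raycasting step described above (mouse position to NDC, then nearest hit wins) can be sketched without any library. This is a simplified illustration, not how Three.js implements it: the functions pointerToNDC, intersectSphere, and pick are hypothetical, objects are approximated as spheres, and the ray direction is supplied directly instead of being derived from the camera. In a real project you would normally rely on the Raycaster class rather than hand-rolled math.

```javascript
// Convert a pointer position in CSS pixels (origin top-left, Y down)
// to normalized device coordinates (origin at center, Y up, range -1..1).
function pointerToNDC(clientX, clientY, width, height) {
  return {
    x: (clientX / width) * 2 - 1,
    y: -(clientY / height) * 2 + 1,
  };
}

// Distance along the ray to a sphere, or null on a miss.
// `direction` must be normalized.
function intersectSphere(origin, direction, sphere) {
  const oc = {
    x: origin.x - sphere.center.x,
    y: origin.y - sphere.center.y,
    z: origin.z - sphere.center.z,
  };
  const b = oc.x * direction.x + oc.y * direction.y + oc.z * direction.z;
  const c = oc.x * oc.x + oc.y * oc.y + oc.z * oc.z - sphere.radius ** 2;
  const discriminant = b * b - c;
  if (discriminant < 0) return null;
  const root = Math.sqrt(discriminant);
  const near = -b - root;
  const far = -b + root;
  if (near >= 0) return near;
  if (far >= 0) return far; // ray starts inside the sphere
  return null;              // sphere is entirely behind the ray
}

// Nearest hit wins, matching "nearest hit = clicked object".
function pick(origin, direction, spheres) {
  let best = null;
  for (const sphere of spheres) {
    const distance = intersectSphere(origin, direction, sphere);
    if (distance !== null && (best === null || distance < best.distance)) {
      best = { name: sphere.name, distance };
    }
  }
  return best;
}

const ndc = pointerToNDC(400, 300, 800, 600); // center of an 800x600 canvas
const hit = pick(
  { x: 0, y: 0, z: 5 },  // ray origin (camera position)
  { x: 0, y: 0, z: -1 }, // ray direction (straight ahead)
  [
    { name: "far", center: { x: 0, y: 0, z: -3 }, radius: 1 },
    { name: "near", center: { x: 0, y: 0, z: 0 }, radius: 1 },
  ]
);
console.log(ndc, hit);
```

Note the sign flip on the Y axis: screen coordinates grow downward, while NDC grows upward. Forgetting it is a common cause of picks that land on a vertically mirrored object.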

The golden rule of Three.js architecture: the Renderer owns the “what” (drawing), but your animation loop owns the “when” and “how” (updating). Separating update logic from rendering logic keeps your code clean and debuggable. When performance drops, you can measure the two halves separately: time spent in your update code is CPU work, while time spent in the render call is a mix of CPU-side draw-call submission and GPU work.
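One common way to keep a fixed physics step while rendering at a variable frame rate is an accumulator, sketched below in plain JavaScript. This is an illustration of the pattern only, not the internals of cannon-es or Rapier: createFixedStepper, FIXED_STEP, MAX_SUBSTEPS, and the falling-ball "world" are all made up for the example.

```javascript
const FIXED_STEP = 1 / 60; // simulation step in seconds
const MAX_SUBSTEPS = 5;    // cap catch-up work after a long stall

// Returns an advance(delta) function that runs stepFn in fixed increments.
function createFixedStepper(stepFn) {
  let accumulator = 0;
  return function advance(delta) {
    accumulator += delta;
    let steps = 0;
    while (accumulator >= FIXED_STEP && steps < MAX_SUBSTEPS) {
      stepFn(FIXED_STEP);
      accumulator -= FIXED_STEP;
      steps += 1;
    }
    // If the cap was hit, drop the backlog instead of falling further behind.
    if (accumulator >= FIXED_STEP) accumulator = 0;
    return steps;
  };
}

// Toy "physics world": a ball falling under gravity.
const ball = { y: 10, velocityY: 0 };
const advance = createFixedStepper((dt) => {
  ball.velocityY += -9.81 * dt;
  ball.y += ball.velocityY * dt;
});

// Simulate half a second of 30 FPS rendering: 15 frames of 1/30 s each.
let totalSteps = 0;
for (let frame = 0; frame < 15; frame++) {
  totalSteps += advance(1 / 30);
  // In a real app: copy ball.y to the mesh position here, then render.
}
console.log(totalSteps, ball.y);
```

Because the simulation only ever advances in FIXED_STEP increments, the ball follows the same trajectory whether the display runs at 30, 60, or 144 Hz; only how many steps happen per rendered frame changes.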

Theory Primer

The following chapters cover every core concept you need before starting the projects. Read them as a mini-book – each chapter builds on the previous ones. The concepts are ordered from foundational (rendering pipeline, scene graph) to advanced (shaders, post-processing, performance optimization).

Each chapter includes definitions, a deep dive explanation, ASCII diagrams, concrete examples, misconceptions to avoid, and check-your-understanding questions. The chapters map directly to the Concept Summary Table and Project-to-Concept Map that follow the primer.

Do not try to memorize everything in one pass. Read through once for the mental models, then revisit specific chapters as each project demands deeper understanding. The primer is your reference manual for the entire sprint.

Chapter 1: The Rendering Pipeline (Scene, Camera, Renderer)

Fundamentals

Every Three.js application rests on three pillars: a Scene that holds your 3D world, a Camera that defines the viewpoint, and a Renderer that converts the 3D scene into a 2D image on your screen. These three objects form what is often called the “Holy Trinity” of Three.js. Without any one of them, nothing appears on screen. The Scene is a container – a tree structure (the scene graph) where you place meshes, lights, helpers, and even other scenes. The Camera defines which part of that world is visible and how it is projected (perspective or orthographic). The Renderer takes the scene and camera, traverses every visible object, translates geometry and material data into WebGL draw calls, and sends those instructions to the GPU. The GPU executes vertex and fragment shaders in parallel across thousands of cores, producing the final pixels that appear on the HTML canvas element. This entire process repeats every frame inside a render loop driven by requestAnimationFrame, typically targeting 60 frames per second. Understanding this pipeline – from JavaScript objects to GPU shaders to pixels on screen – is the single most important mental model you need before touching any Three.js project.

Deep Dive

The rendering pipeline in Three.js is a multi-stage process that bridges the gap between high-level JavaScript objects and low-level GPU instructions. Let us trace the full journey of a single frame.

Stage 1: Scene Graph Traversal. When you call renderer.render(scene, camera), the renderer walks the scene graph – a tree rooted at the Scene object. Every node in this tree is an Object3D (or a subclass like Mesh, Light, Group). The renderer collects all renderable objects, computing their world matrices by multiplying each object’s local matrix with its parent’s world matrix. This is how position, rotation, and scale inheritance works: a child mesh at position (1, 0, 0) whose parent group is at (5, 0, 0) ends up at world position (6, 0, 0).
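The world-matrix rule from Stage 1 can be checked by hand with a few lines of plain JavaScript. The sketch below uses flat, column-major arrays of 16 numbers; the helper functions are written for this example and are not the Three.js Matrix4 API.

```javascript
// 4x4 matrices as flat arrays of 16 numbers in column-major order
// (the element at row r, column c lives at index c * 4 + r).
function makeTranslation(x, y, z) {
  return [1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, x, y, z, 1];
}

function makeUniformScale(s) {
  return [s, 0, 0, 0, 0, s, 0, 0, 0, 0, s, 0, 0, 0, 0, 1];
}

// Standard matrix product a * b.
function multiply(a, b) {
  const out = new Array(16).fill(0);
  for (let col = 0; col < 4; col++) {
    for (let row = 0; row < 4; row++) {
      let sum = 0;
      for (let k = 0; k < 4; k++) {
        sum += a[k * 4 + row] * b[col * 4 + k];
      }
      out[col * 4 + row] = sum;
    }
  }
  return out;
}

// The translation part of a transform matrix is its last column.
function getPosition(m) {
  return { x: m[12], y: m[13], z: m[14] };
}

// world = parentWorld * local
const parentWorld = makeTranslation(5, 0, 0);
const childLocal = makeTranslation(1, 0, 0);
const childWorld = multiply(parentWorld, childLocal);
console.log(getPosition(childWorld)); // { x: 6, y: 0, z: 0 }

// Scale is inherited too: a parent scaled 2x doubles the child's offset.
const scaledParent = multiply(makeTranslation(5, 0, 0), makeUniformScale(2));
const scaledChildWorld = multiply(scaledParent, childLocal);
console.log(getPosition(scaledChildWorld)); // { x: 7, y: 0, z: 0 }
```

The second case is the one that tends to surprise people: the child's local offset of 1 is measured in the parent's scaled space, so it contributes 2 units in world space.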

Stage 2: Frustum Culling. Before sending anything to the GPU, the renderer checks each object against the camera’s frustum – the 3D volume visible to the camera. Objects entirely outside this volume are skipped. This is an automatic optimization that can save significant GPU work in large scenes. The frustum is defined by the camera’s near plane, far plane, field of view (for perspective), and aspect ratio.
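To show what a frustum test amounts to, here is a minimal sketch that culls bounding spheres against six planes. It assumes a camera at the origin looking down -Z, which sidesteps the view-matrix step; makeFrustumPlanes and sphereInFrustum are hypothetical helpers for this example, and the renderer's real implementation differs in detail.

```javascript
// Build the six planes of a perspective frustum for a camera at the origin
// looking down -Z. Each plane is { normal, constant } with the normal
// pointing into the frustum, so "inside" means normal . p + constant >= 0.
function makeFrustumPlanes(fovDegrees, aspect, near, far) {
  const halfV = (fovDegrees * Math.PI) / 360;        // half vertical FOV
  const halfH = Math.atan(Math.tan(halfV) * aspect); // half horizontal FOV
  const sv = Math.sin(halfV);
  const cv = Math.cos(halfV);
  const sh = Math.sin(halfH);
  const ch = Math.cos(halfH);
  return [
    { normal: { x: 0, y: 0, z: -1 }, constant: -near }, // near
    { normal: { x: 0, y: 0, z: 1 }, constant: far },    // far
    { normal: { x: 0, y: -cv, z: -sv }, constant: 0 },  // top
    { normal: { x: 0, y: cv, z: -sv }, constant: 0 },   // bottom
    { normal: { x: -ch, y: 0, z: -sh }, constant: 0 },  // right
    { normal: { x: ch, y: 0, z: -sh }, constant: 0 },   // left
  ];
}

// A bounding sphere is culled only if it lies entirely behind some plane.
function sphereInFrustum(planes, center, radius) {
  for (const { normal, constant } of planes) {
    const distance =
      normal.x * center.x + normal.y * center.y + normal.z * center.z + constant;
    if (distance < -radius) return false;
  }
  return true;
}

const planes = makeFrustumPlanes(60, 1, 0.1, 100);
const candidates = [
  { name: "in front", center: { x: 0, y: 0, z: -10 }, radius: 1 },
  { name: "behind camera", center: { x: 0, y: 0, z: 10 }, radius: 1 },
  { name: "beyond far plane", center: { x: 0, y: 0, z: -200 }, radius: 1 },
  { name: "straddling right edge", center: { x: 6.5, y: 0, z: -10 }, radius: 1 },
];
const visible = candidates
  .filter((c) => sphereInFrustum(planes, c.center, c.radius))
  .map((c) => c.name);
console.log(visible);
```

The test is conservative: an object that only partly overlaps the frustum is kept, because skipping it would make it pop out of view at the screen edge.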

Stage 3: Sorting. Opaque objects are sorted front-to-back. This seems counterintuitive, but it is an optimization: the GPU’s depth buffer (z-buffer) can skip fragment shading for pixels that are behind already-rendered surfaces (early z-rejection). Transparent objects are sorted back-to-front because they must be blended in the correct order – you need to see through the closer transparent object to the one behind it (painter’s algorithm).
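The two sort orders from Stage 3 can be sketched in a few lines. This is a simplification: it sorts by straight-line distance to the camera and ignores other ordering criteria a real renderer may apply. The function buildRenderOrder and the object shape are invented for the example.

```javascript
function distanceBetween(a, b) {
  return Math.hypot(a.x - b.x, a.y - b.y, a.z - b.z);
}

// Opaque objects first (front-to-back), then transparent (back-to-front).
function buildRenderOrder(objects, cameraPosition) {
  const withDepth = objects.map((object) => ({
    ...object,
    depth: distanceBetween(object.position, cameraPosition),
  }));
  const opaque = withDepth
    .filter((o) => !o.transparent)
    .sort((a, b) => a.depth - b.depth); // nearest first: enables early z-rejection
  const transparent = withDepth
    .filter((o) => o.transparent)
    .sort((a, b) => b.depth - a.depth); // farthest first: correct blending
  return [...opaque, ...transparent].map((o) => o.name);
}

const order = buildRenderOrder(
  [
    { name: "far wall", position: { x: 0, y: 0, z: -20 }, transparent: false },
    { name: "near glass", position: { x: 0, y: 0, z: -2 }, transparent: true },
    { name: "crate", position: { x: 0, y: 0, z: -5 }, transparent: false },
    { name: "far glass", position: { x: 0, y: 0, z: -10 }, transparent: true },
  ],
  { x: 0, y: 0, z: 0 }
);
console.log(order); // [ 'crate', 'far wall', 'far glass', 'near glass' ]
```

Drawing all opaque objects before any transparent one matters as much as the order within each group: the transparent surfaces need the finished opaque depth buffer to be hidden correctly behind solid geometry.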

Stage 4: GPU State Setup. For each renderable object, the renderer sets up the GPU state. This means binding the correct shader program (compiled from the material’s GLSL code), uploading uniform values (model/view/projection matrices, light positions, material colors, time values), binding textures to texture units, and binding the geometry’s vertex buffers (position, normal, UV data stored in typed arrays). Each mesh typically requires its own draw call (one per material if it uses several), even when it shares its geometry and material with other meshes; sharing mainly saves shader and state switches between calls.

Stage 5: Draw Calls. The renderer issues draw calls to the GPU via WebGL’s drawElements() or drawArrays() commands. Each draw call tells the GPU to process a set of triangles through the shader pipeline. This is where the CPU hands off work to the GPU.

Stage 6: GPU Shader Pipeline. On the GPU, the vertex shader runs first, processing each vertex to transform it from model space to clip space. The key transformation is: gl_Position = projectionMatrix * modelViewMatrix * vec4(position, 1.0). After vertex processing, the GPU assembles vertices into triangles, clips them against the view frustum, and rasterizes them into fragments (potential pixels). The fragment shader then runs for each fragment, computing its final color based on material properties, lighting, textures, and any custom logic. Finally, depth testing determines which fragments are visible (closest to the camera), and the results are written to the framebuffer.
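The vertex transformation in Stage 6 can be reproduced on the CPU to see what the numbers look like. The sketch below assumes an OpenGL-style projection (camera looking down -Z, NDC depth from -1 to +1) and a model-view matrix that is a pure translation; all helper names are made up for this example.

```javascript
// Column-major 4x4 perspective projection (OpenGL-style clip space).
function makePerspective(fovDegrees, aspect, near, far) {
  const f = 1 / Math.tan((fovDegrees * Math.PI) / 360);
  return [
    f / aspect, 0, 0, 0,
    0, f, 0, 0,
    0, 0, (far + near) / (near - far), -1,
    0, 0, (2 * far * near) / (near - far), 0,
  ];
}

function makeTranslation(x, y, z) {
  return [1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, x, y, z, 1];
}

// Multiply a column-major matrix by a 4-component column vector.
function transform(m, v) {
  const out = [0, 0, 0, 0];
  for (let row = 0; row < 4; row++) {
    for (let col = 0; col < 4; col++) {
      out[row] += m[col * 4 + row] * v[col];
    }
  }
  return out;
}

// Mirrors: gl_Position = projectionMatrix * modelViewMatrix * vec4(position, 1.0)
function toClipSpace(projectionMatrix, modelViewMatrix, position) {
  const viewSpace = transform(modelViewMatrix, [...position, 1]);
  return transform(projectionMatrix, viewSpace);
}

// After the vertex shader, the GPU divides by w to get NDC.
function toNDC(clip) {
  return clip.slice(0, 3).map((value) => value / clip[3]);
}

const projection = makePerspective(90, 1, 1, 100);
const modelView = makeTranslation(0, 0, -5); // camera sits 5 units back
const clip = toClipSpace(projection, modelView, [5, 0, 0]);
const ndc = toNDC(clip);
console.log(clip, ndc);
```

With a 90 degree field of view, a point 5 units to the side and 5 units in front of the camera lands on the right edge of the view, so its NDC x comes out at approximately 1. The divide by w is what makes distant objects shrink.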

Stage 7: Display. The framebuffer contents are displayed on the HTML canvas element. If double buffering is active (default), the GPU swaps the back buffer to the front buffer, making the new frame visible without tearing.

The render loop ties this all together. You use requestAnimationFrame(animate) to schedule the next frame. This browser API targets the display’s refresh rate (typically 60Hz, but 120Hz or 144Hz on modern monitors) and automatically pauses when the tab is not visible, saving battery and CPU resources. Inside the animate function, you update object states (positions, rotations, animations), then call renderer.render(scene, camera) to produce the next frame.
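Why the update step should scale by elapsed time can be demonstrated without a browser. The sketch below replaces requestAnimationFrame with a plain loop that simulates different refresh rates; ROTATION_SPEED and the function names are assumptions made for the illustration.

```javascript
const ROTATION_SPEED = Math.PI; // radians per second (half a turn)

// Frame-rate independent: scale the change by elapsed time.
function updateWithDelta(state, delta) {
  state.rotationY += ROTATION_SPEED * delta;
}

// Frame-rate dependent: a fixed amount per frame (the common beginner bug).
function updatePerFrame(state) {
  state.rotationY += 0.05;
}

// Stand-in for the browser: call `update` once per simulated frame.
function simulate(update, fps, seconds) {
  const state = { rotationY: 0 };
  const delta = 1 / fps;
  const frames = Math.round(fps * seconds);
  for (let i = 0; i < frames; i++) {
    update(state, delta);
    // In a browser, renderer.render(scene, camera) would go here.
  }
  return state.rotationY;
}

const at60 = simulate(updateWithDelta, 60, 2);
const at144 = simulate(updateWithDelta, 144, 2);
const naive60 = simulate(updatePerFrame, 60, 2);
const naive144 = simulate(updatePerFrame, 144, 2);

console.log(at60, at144);       // both close to 2 * Math.PI
console.log(naive60, naive144); // the 144 Hz run has rotated far more
```

In a real loop the delta value would come from a clock reading taken each frame rather than from a constant, since actual frame times vary.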

A critical concept is the draw call. Each draw call has overhead on the CPU side (setting up state, uploading uniforms, binding buffers). The GPU itself is massively parallel and can process millions of triangles per frame, but it has to wait for the CPU to issue each draw call. This is why reducing draw calls (through instancing or geometry merging) is often more impactful than reducing triangle count. Sharing one material across meshes does not remove draw calls by itself, but it cuts the state changes between them.
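A toy cost model makes the effect of instancing easy to see. It assumes every mesh is visible and uses a single material, and that every group of meshes sharing the same geometry and material is converted to one instanced batch. The functions and the string keys standing in for geometry and material are hypothetical.

```javascript
// Toy cost model: every visible mesh costs one draw call.
function countDrawCalls(meshes) {
  return meshes.length;
}

// With instancing, all meshes that share the same geometry AND material
// can collapse into a single instanced draw call.
function countInstancedDrawCalls(meshes) {
  const batches = new Set(meshes.map((m) => `${m.geometry}|${m.material}`));
  return batches.size;
}

const forest = [];
for (let i = 0; i < 1000; i++) forest.push({ geometry: "tree", material: "bark" });
for (let i = 0; i < 500; i++) forest.push({ geometry: "rock", material: "stone" });

console.log(countDrawCalls(forest));          // 1500
console.log(countInstancedDrawCalls(forest)); // 2
```

The triangle count is identical in both cases; only the number of times the CPU has to set up state and issue a call changes, which is why the instanced version is typically much cheaper on the CPU side.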

WebGL is the underlying graphics API that Three.js uses by default. WebGL is based on OpenGL ES 2.0/3.0 and provides a JavaScript interface to the GPU. Three.js abstracts away the complexity of WebGL – you do not need to write raw shader code, manage buffer objects, or handle state machines manually. However, understanding that WebGL exists underneath helps you debug performance issues and appreciate why certain Three.js patterns exist. As of late 2025, Three.js also supports WebGPU as an alternative backend through WebGPURenderer, offering reduced CPU overhead and compute shader support.

How This Fits in Projects

Every single project in this guide uses the rendering pipeline. Project 1 (spinning textured cube) establishes the basic Scene/Camera/Renderer pattern. Project 5 (haunted house) adds complex lighting to the pipeline. Project 8 (ocean water shader) dives into the GPU shader stages directly. Project 12 (procedural city) forces you to optimize draw calls and understand the full pipeline from a performance perspective.

Definitions & Key Terms

- Scene: The root container holding all objects, lights, and cameras in a scene graph tree structure

- Camera: Defines the viewpoint and projection type (perspective or orthographic) for rendering

- Renderer (WebGLRenderer): Converts the 3D scene into 2D pixels on an HTML canvas using the GPU

- Render Loop: A continuous cycle using requestAnimationFrame that updates state and re-renders each frame

- requestAnimationFrame (rAF): Browser API that schedules a callback before the next display repaint, targeting the monitor’s refresh rate

- Draw Call: A single CPU-to-GPU instruction to render a batch of triangles; each mesh typically requires one, even when meshes share the same geometry and material, unless they are instanced or merged

- Frustum: The 3D volume visible to the camera; objects outside it are culled

- Framebuffer: GPU memory buffer where rendered pixels are stored before display

- Depth Buffer (Z-Buffer): Per-pixel depth values used to determine which surfaces are visible

- WebGL: JavaScript API for GPU-accelerated 2D and 3D rendering in browsers, based on OpenGL ES

Mental Model Diagram

THE THREE.JS RENDERING PIPELINE
================================

JavaScript World                          GPU World
================                          =========

+----------+
| Scene    |  (scene graph root)
| +-----+  |
| |Mesh |  |
| |Mesh |  |   renderer.render()          +------------------+
| |Light|  | ========================>    | Vertex Shader    |
| |Group|  |                              | (per vertex)     |
| +-----+  |                              +--------+---------+
+----------+                                       |
                                                   v
+----------+                              +------------------+
| Camera   |                              | Triangle Assembly|
| (viewpt) |                              | + Rasterization  |
+----------+                              +--------+---------+
                                                   |
+----------+                                       v
| Renderer |                              +------------------+
| (WebGL)  |                              | Fragment Shader  |
| +------+ |                              | (per pixel)      |
| |Canvas| |                              +--------+---------+
| +------+ |                                       |
+----------+                                       v
     ^                                    +------------------+
     |                                    | Depth Test +     |
     +------------------------------------| Framebuffer      |
          final pixels displayed          +------------------+

THE RENDER LOOP
===============

requestAnimationFrame(animate)
         |
         v
+------------------+
| Update objects   |  (rotate, move, animate)
| Update clock     |  (delta time)
+--------+---------+
         |
         v
+------------------+
| renderer.render  |
| (scene, camera)  |
+--------+---------+
         |
         v
+------------------+
| GPU processes    |  (~16.6ms for 60fps)
| frame            |
+--------+---------+
         |
         v
+------------------+
| Display on       |
| canvas           |
+--------+---------+
         |
         +-------> next frame (loop back to top)

SCENE GRAPH TRAVERSAL ORDER

============================

Scene (root)
|
+-- Group A          (matrixWorld = Scene.matrixWorld * A.matrix)
|   |
|   +-- Mesh 1       (matrixWorld = A.matrixWorld * Mesh1.matrix)
|   +-- Mesh 2       (matrixWorld = A.matrixWorld * Mesh2.matrix)
|
+-- DirectionalLight
|
+-- Mesh 3           (matrixWorld = Scene.matrixWorld * Mesh3.matrix)

DRAW CALL BREAKDOWN

====================

Object   Geometry    Material   Draw Calls
------   --------    --------   ----------
Mesh 1   BoxGeo      Red Mat    1
Mesh 2   BoxGeo      Blue Mat   1  (different material = new call)
Mesh 3   SphereGeo   Red Mat    1  (different geometry = new call)
---
Total: 3 draw calls

vs. InstancedMesh (1000 boxes, same material): 1 draw call!
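The world-matrix rule from the scene graph traversal diagram (a child's world matrix is its parent's world matrix times its own local matrix) can be sketched in plain JavaScript. The node shape `{ name, matrix, children }` and the helper names are assumptions for illustration; matrices are flat, column-major arrays of 16 numbers.

```javascript
// Multiply two 4x4 column-major matrices: result = a * b.
function multiply(a, b) {
  const out = new Array(16).fill(0);
  for (let col = 0; col < 4; col++) {
    for (let row = 0; row < 4; row++) {
      for (let k = 0; k < 4; k++) {
        out[col * 4 + row] += a[k * 4 + row] * b[col * 4 + k];
      }
    }
  }
  return out;
}

function translation(x, y, z) {
  return [1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, x, y, z, 1];
}

function scaling(s) {
  return [s, 0, 0, 0, 0, s, 0, 0, 0, 0, s, 0, 0, 0, 0, 1];
}

// Depth-first traversal: parents are resolved before their children.
function updateWorldMatrices(node, parentWorld) {
  node.matrixWorld = parentWorld ? multiply(parentWorld, node.matrix) : node.matrix.slice();
  for (const child of node.children) {
    updateWorldMatrices(child, node.matrixWorld);
  }
}

const mesh1 = { name: "Mesh 1", matrix: translation(1, 0, 0), children: [] };
const groupA = {
  name: "Group A",
  matrix: multiply(translation(0, 5, 0), scaling(2)), // move up 5, then double the size of children
  children: [mesh1],
};
const sceneRoot = { name: "Scene", matrix: translation(0, 0, 0), children: [groupA] };
updateWorldMatrices(sceneRoot, null);

// The world position is stored in the last column of matrixWorld.
const worldPos = mesh1.matrixWorld.slice(12, 15);
console.log(worldPos); // [ 2, 5, 0 ]
```

Mesh 1 sits at x = 1 in the group's local space, but the group's scale and translation place it at (2, 5, 0) in world space.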

How It Works

Step-by-step process for rendering a single frame:

- requestAnimationFrame fires – the browser signals it is time for a new frame

- Clock.getDelta() called – compute time elapsed since last frame (delta time)

- Update scene state – move objects, advance animations, update physics using delta time

- renderer.render(scene, camera) called – begins the pipeline

- Scene graph traversal – renderer walks the tree, computes world matrices for all objects

- Frustum culling – objects outside camera view are skipped

- Sort objects – opaque front-to-back, transparent back-to-front

- For each object: set GPU state – bind shader program, upload uniforms, bind textures and vertex buffers

- Issue draw call – CPU tells GPU to process triangles

- GPU vertex shader – transforms vertices to screen coordinates

- Rasterization – triangles converted to pixel-sized fragments

- GPU fragment shader – computes color for each fragment

- Depth testing – determines which fragments are visible

- Write to framebuffer – final pixels stored in GPU memory

- Buffer swap – new frame displayed on canvas

- Loop repeats – requestAnimationFrame schedules next iteration
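The sorting step above can be sketched as a two-bucket sort. This is a simplified model under stated assumptions: the item shape `{ name, depth, transparent }` is hypothetical, `depth` is the distance from the camera, and the real renderer considers additional keys that are left out here.

```javascript
// Opaque items are drawn nearest-first so the depth test can reject hidden
// fragments early; transparent items are drawn farthest-first so blending
// layers them correctly.
function sortRenderList(items) {
  const opaque = items
    .filter((item) => !item.transparent)
    .sort((a, b) => a.depth - b.depth); // front-to-back
  const transparent = items
    .filter((item) => item.transparent)
    .sort((a, b) => b.depth - a.depth); // back-to-front
  return [...opaque, ...transparent];
}

const order = sortRenderList([
  { name: "glass-near", depth: 2, transparent: true },
  { name: "wall-far", depth: 10, transparent: false },
  { name: "glass-far", depth: 8, transparent: true },
  { name: "crate-near", depth: 3, transparent: false },
]).map((item) => item.name);

console.log(order.join(" -> "));
// crate-near -> wall-far -> glass-far -> glass-near
```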

Invariants:

- Scene, Camera, and Renderer must all exist before rendering

- The renderer’s canvas must be attached to the DOM to be visible

- camera.updateProjectionMatrix() must be called after changing FOV, aspect, near, or far

- The render loop must call renderer.render() every frame for continuous animation

Failure Modes:

- Blank canvas: forgot to call renderer.render() or never started the animation loop

- Black screen: no lights in the scene (with non-Basic materials)

- Stretched image: aspect ratio mismatch between camera and canvas

- Performance degradation: too many draw calls, unculled objects, or oversized textures
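The "stretched image" failure mode follows directly from how a perspective projection scales x and y. The sketch below assumes the standard perspective matrix, where the y scale is 1 / tan(fov / 2) and the x scale is that value divided by the aspect ratio; the function names are illustrative, not Three.js API.

```javascript
// Scale terms of a standard perspective projection matrix.
function projectionScale(fovDegrees, aspect) {
  const f = 1 / Math.tan((fovDegrees * Math.PI) / 180 / 2);
  return { x: f / aspect, y: f };
}

// Horizontal stretch seen on screen when the canvas has one aspect ratio
// but the projection matrix was built with another. 1 means undistorted.
function horizontalStretch(cameraAspect, canvasAspect) {
  return canvasAspect / cameraAspect;
}

const wide = projectionScale(75, 16 / 9);
console.log(wide.x < wide.y); // true: x is scaled down to fit the wider view

// Canvas resized to a square, camera still configured for 16:9.
console.log(horizontalStretch(16 / 9, 1)); // 0.5625, everything looks squeezed
// Camera aspect updated to match the canvas.
console.log(horizontalStretch(1, 1));      // 1, no distortion
```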

Minimal Concrete Example

// Complete minimal Three.js application (assumes the `three` package is installed)
import * as THREE from 'three';

// 1. Create the Holy Trinity
const scene = new THREE.Scene();
const camera = new THREE.PerspectiveCamera(
  75,                                      // vertical FOV in degrees
  window.innerWidth / window.innerHeight,  // aspect ratio
  0.1,                                     // near plane
  1000                                     // far plane
);
const renderer = new THREE.WebGLRenderer({ antialias: true });
renderer.setSize(window.innerWidth, window.innerHeight);
renderer.setPixelRatio(Math.min(window.devicePixelRatio, 2)); // cap for performance
document.body.appendChild(renderer.domElement);

// 2. Add objects to scene
const geometry = new THREE.BoxGeometry(1, 1, 1);
const material = new THREE.MeshStandardMaterial({ color: 0x00ff00 });
const cube = new THREE.Mesh(geometry, material);
scene.add(cube);

// 3. Add light (required for Standard/Physical materials)
const light = new THREE.DirectionalLight(0xffffff, 1);
light.position.set(5, 5, 5);
scene.add(light);

// 4. Position camera
camera.position.z = 5;

// 5. Handle window resize
window.addEventListener('resize', () => {
  camera.aspect = window.innerWidth / window.innerHeight;
  camera.updateProjectionMatrix();         // rebuild the matrix with the new aspect
  renderer.setSize(window.innerWidth, window.innerHeight);
});

// 6. Animation loop
const clock = new THREE.Clock();
function animate() {
  requestAnimationFrame(animate);          // schedule the next frame
  const delta = clock.getDelta();          // seconds since the previous frame
  cube.rotation.y += 1.0 * delta;          // 1 radian per second
  renderer.render(scene, camera);
}
animate();

Common Misconceptions

- “Three.js IS WebGL”: Three.js is a library that abstracts WebGL (and now WebGPU). You can use raw WebGL without Three.js. Three.js provides a scene graph, material system, loaders, and other high-level features that WebGL does not have.

- “The render loop runs at exactly 60fps”: requestAnimationFrame targets the display’s refresh rate (60Hz, 120Hz, 144Hz) but the actual frame rate depends on GPU/CPU load. Heavy scenes will drop frames. There is no guarantee.

- “More objects always means slower rendering”: Performance depends primarily on draw calls and shader complexity. 10,000 instanced objects (1 draw call) can render faster than 100 unique objects (100 draw calls). The bottleneck is usually CPU-side draw call overhead, not GPU triangle throughput.

- “You only need Scene and Renderer”: Without a Camera, the renderer has no viewpoint. You will get a black screen or an error. All three are required.

Check-Your-Understanding Questions

- What are the three core objects required to render anything in Three.js, and what role does each play?

- Why does Three.js sort opaque objects front-to-back but transparent objects back-to-front?

- What happens inside the GPU between a draw call being issued and pixels appearing on screen?

- Why is reducing draw calls often more impactful than reducing triangle count?

- What does requestAnimationFrame do, and why is it preferred over setInterval for animation?

Check-Your-Understanding Answers

- Scene (container for all objects, lights, and cameras), Camera (defines the viewpoint and projection), and Renderer (converts the 3D scene to 2D pixels on a canvas). Without all three, nothing renders.

- Opaque objects front-to-back enables early z-rejection – the GPU skips fragment shading for pixels behind already-rendered surfaces. Transparent objects back-to-front ensures correct alpha blending – you must render distant transparent objects first so closer ones blend on top correctly.

- The vertex shader transforms each vertex to screen coordinates, triangles are assembled and rasterized into fragments, the fragment shader computes each fragment’s color, depth testing determines visibility, and the result is written to the framebuffer.

- Each draw call has CPU overhead: binding shaders, uploading uniforms, binding buffers. The GPU can process millions of triangles in parallel, but it must wait for the CPU to set up each draw call. Reducing draw calls via instancing or merging removes this CPU bottleneck.

- requestAnimationFrame schedules a callback before the next browser repaint, syncing with the display refresh rate. Because updates are aligned with the repaint, it avoids the skipped or duplicated frames that an unsynchronized setInterval timer can produce. It also automatically pauses when the tab is hidden (saving battery/CPU) and adapts to the monitor’s refresh rate.

Real-World Applications

- E-commerce product viewers: Spinning 3D models of shoes, furniture, electronics using the basic render pipeline

- Data visualization dashboards: Rendering thousands of data points as 3D objects with the scene graph

- Architectural walkthroughs: Real-time rendering of building interiors with camera navigation

- Browser-based games: Continuous render loops with game state updates every frame

- Interactive art installations: GPU-accelerated visuals driven by user input

Where You Will Apply It

- Project 1 (Spinning Geometry Gallery): Establish the Scene/Camera/Renderer pattern, build your first render loop

- Project 2 (Solar System Orrery): Nested scene graph with hierarchical transformations

- Project 4 (Haunted House): Complex scene with multiple lights integrated into the pipeline

- Project 8 (Shader Effects): Write custom vertex and fragment shaders that run inside this pipeline

- Project 12 (Performance Dashboard): Optimize the pipeline by monitoring draw calls and frame timing

- All other projects build on this foundation

References

- Three.js official documentation: https://threejs.org/docs/

- Discover Three.js book, Chapter 1 - The Rendering Pipeline: https://discoverthreejs.com/book/first-steps/first-scene/

- “WebGL Fundamentals” by Gregg Tavares: https://webglfundamentals.org/

- “Interactive Computer Graphics” by Edward Angel - Ch. 1-2 (rendering pipeline concepts)

- Three.js Journey by Bruno Simon - Lesson 3 (Basic Scene): https://threejs-journey.com/lessons/basic-scene

Key Insight

The entire Three.js rendering pipeline boils down to one repeating cycle: update state, traverse the scene graph, issue draw calls, let the GPU shade vertices and fragments, display the result – and the performance of your application is determined by how efficiently you manage each stage of this cycle.

Summary

The rendering pipeline is the foundation of every Three.js application. It begins with three JavaScript objects (Scene, Camera, Renderer) and ends with pixels on an HTML canvas, with the GPU’s vertex and fragment shaders doing the heavy computational work in between. The render loop driven by requestAnimationFrame provides the heartbeat that keeps this pipeline running at the display’s refresh rate. Understanding draw calls, frustum culling, and the distinction between CPU-side setup and GPU-side parallel execution is essential for writing performant 3D applications.

Homework/Exercises

- Trace the Pipeline: Draw your own diagram of the rendering pipeline, labeling each stage from JavaScript object creation to pixels on screen. Include where the CPU hands off work to the GPU. Annotate each stage with what data flows between stages (vertices, fragments, pixels).

- Draw Call Counting: Given a scene with 5 cubes (same geometry, same material), 3 spheres (same geometry, different materials for each), and 1 plane (unique geometry, shared material with cubes), calculate the minimum number of draw calls. Then describe how you could reduce the count using InstancedMesh or geometry merging.

- Resize Handler: Write pseudocode for a window resize handler that correctly updates the camera aspect ratio, projection matrix, and renderer size. Explain what visual artifact occurs if you update the renderer size but forget to update the camera aspect ratio.

Solutions to Homework/Exercises

- Your diagram should show: JS Objects (Scene, Camera, Renderer) -> Scene Graph Traversal -> Frustum Culling -> Object Sorting -> GPU State Setup (per object) -> Draw Call -> Vertex Shader (per vertex) -> Triangle Assembly -> Rasterization -> Fragment Shader (per fragment) -> Depth Test -> Framebuffer -> Canvas Display. The CPU-GPU handoff occurs at the draw call. Data flowing: vertices/attributes to vertex shader, varying values to fragment shader, fragment colors to depth test, final colors to framebuffer.

- Draw calls: 5 cubes with same geo+material = 1 draw call (if instanced) or 5 (if separate meshes). 3 spheres with different materials = 3 draw calls (cannot batch different materials). 1 plane with shared material but different geometry = 1 draw call. Without instancing: 5 + 3 + 1 = 9 draw calls. With InstancedMesh for the 5 cubes: 1 + 3 + 1 = 5 draw calls. Merging the 5 cubes + plane (same material) into one geometry: 1 + 3 = 4 draw calls.

- Pseudocode:

function onWindowResize():
    camera.aspect = window.innerWidth / window.innerHeight
    camera.updateProjectionMatrix()
    renderer.setSize(window.innerWidth, window.innerHeight)

If you forget camera.updateProjectionMatrix(), the projection matrix uses stale aspect ratio values, causing the scene to appear stretched horizontally or vertically after resize. If you forget to update camera.aspect, the same stretching occurs.

Chapter 2: Geometries and Meshes

Fundamentals

Geometry is the skeleton of every 3D object – it defines the shape through vertices (points in 3D space), edges, and faces (triangles). A Mesh is the combination of geometry and material: the geometry says “what shape” and the material says “what it looks like.” In Three.js, all geometry is stored as BufferGeometry, which packs vertex data into flat typed arrays (Float32Array) for efficient GPU transfer. Every vertex carries attributes: at minimum a position (x, y, z), but typically also a normal vector (for lighting), UV coordinates (for texturing), and optionally vertex colors or custom data. The GPU renders everything as triangles – even a “sphere” is just many small triangles approximating a curved surface. The number of triangles (controlled by segment parameters) determines both visual quality and rendering cost. Three.js provides over 15 built-in geometries (box, sphere, plane, torus, cylinder, etc.) with sensible defaults, but you can also create completely custom geometry by defining your own vertex arrays. Understanding how vertices, normals, UVs, and indices work at the buffer level is essential for advanced effects like terrain generation, procedural modeling, and custom deformations.

Deep Dive

Geometry in Three.js is fundamentally about storing and organizing vertex data in a format the GPU can process efficiently. Let us examine each layer of this system.

BufferGeometry: The Foundation. Since Three.js r125, BufferGeometry is the only geometry class in the core library. The older Geometry class (which used JavaScript objects for vertices and faces) was removed because it was slow and memory-hungry: its data had to be converted to typed arrays before it could be uploaded to the GPU. BufferGeometry stores data directly in typed arrays, eliminating this conversion overhead.

A BufferGeometry contains a collection of BufferAttributes. Each attribute is a flat typed array with an associated item size. For example, the position attribute uses item size 3 (x, y, z per vertex), the uv attribute uses item size 2 (u, v per vertex), and the normal attribute uses item size 3 (nx, ny, nz per vertex).

// Conceptual view of a triangle's buffer data:

positions: [x0, y0, z0, x1, y1, z1, x2, y2, z2] // 3 vertices * 3 floats

normals: [nx0,ny0,nz0, nx1,ny1,nz1, nx2,ny2,nz2] // 3 normals * 3 floats

uvs: [u0, v0, u1, v1, u2, v2] // 3 UVs * 2 floats
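To illustrate how item size turns a flat array into per-vertex data, here is a small sketch using plain typed arrays. The helper `readItem` is a hypothetical name used only for this example.

```javascript
// Read item `index` out of a flat typed array with the given item size.
function readItem(array, itemSize, index) {
  const start = index * itemSize;
  return Array.from(array.subarray(start, start + itemSize));
}

const positions = new Float32Array([
  -1, 0, 0,    // vertex 0
   1, 0, 0,    // vertex 1
   0, 1.5, 0,  // vertex 2
]);
const uvs = new Float32Array([0, 0, 1, 0, 0.5, 1]);

// The vertex count is implied by array length divided by item size.
const vertexCount = positions.length / 3;
console.log(vertexCount);               // 3
console.log(readItem(positions, 3, 2)); // [ 0, 1.5, 0 ]
console.log(readItem(uvs, 2, 2));       // [ 0.5, 1 ]
```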

Vertices and Triangles. The GPU only understands triangles. A cube has 6 faces, but each face is made of 2 triangles, so a cube has 12 triangles and needs at least 8 unique vertex positions (but actually 24 vertices in practice because each face needs its own normals and UVs). A sphere with 32 width segments and 16 height segments has approximately 32 * 16 * 2 = 1,024 triangles. More segments mean a smoother appearance but higher rendering cost.
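The counts in this paragraph can be checked with a few lines of arithmetic. This is a sketch with hypothetical function names. The pole-aware sphere count assumes that the top and bottom rows of a UV sphere contribute one triangle per segment instead of two, which is why it comes out slightly below the 32 * 16 * 2 estimate.

```javascript
// A box has 2 faces per axis pair; each face is a grid of quads, 2 triangles per quad.
function boxTriangles(wSegs = 1, hSegs = 1, dSegs = 1) {
  const quads = 2 * (wSegs * hSegs + wSegs * dSegs + hSegs * dSegs);
  return quads * 2;
}

// Each face owns its own (a + 1) x (b + 1) vertex grid, because normals and
// UVs differ per face even where corner positions coincide.
function boxVertices(wSegs = 1, hSegs = 1, dSegs = 1) {
  return 2 * ((wSegs + 1) * (hSegs + 1) + (wSegs + 1) * (dSegs + 1) + (hSegs + 1) * (dSegs + 1));
}

// Quick upper bound: treat every grid cell as a full quad.
function sphereTrianglesEstimate(wSegs, hSegs) {
  return wSegs * hSegs * 2;
}

// Assumption: cells touching a pole collapse to a single triangle.
function sphereTrianglesWithPoles(wSegs, hSegs) {
  return wSegs * (hSegs - 1) * 2;
}

console.log(boxTriangles());                   // 12
console.log(boxVertices());                    // 24
console.log(sphereTrianglesEstimate(32, 16));  // 1024
console.log(sphereTrianglesWithPoles(32, 16)); // 960
```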

Normals. A normal is a unit vector perpendicular to a surface, pointing outward. Normals are critical for lighting calculations – the dot product of the normal and the light direction determines how bright a surface appears. There are two types:

- Face normals: One normal per triangle. Produces flat shading where each face has uniform brightness.

- Vertex normals: One normal per vertex, averaged from the surrounding faces. Produces smooth shading where light varies continuously across surfaces.

Three.js’s computeVertexNormals() calculates smooth normals automatically by averaging the face normals of all triangles sharing each vertex. For flat shading, set material.flatShading = true, which uses face normals instead.
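The relationship between normals and brightness can be sketched with basic vector math. The helpers below are illustrative and written from scratch, not taken from Three.js; `diffuse` implements the simple clamped dot product described above and ignores light color, distance, and material properties.

```javascript
function subtract(a, b) {
  return [a[0] - b[0], a[1] - b[1], a[2] - b[2]];
}

function cross(a, b) {
  return [
    a[1] * b[2] - a[2] * b[1],
    a[2] * b[0] - a[0] * b[2],
    a[0] * b[1] - a[1] * b[0],
  ];
}

function dot(a, b) {
  return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
}

function normalize(v) {
  const length = Math.hypot(v[0], v[1], v[2]);
  return v.map((c) => c / length);
}

// Face normal of a counter-clockwise triangle: cross product of two edges.
function faceNormal(v0, v1, v2) {
  return normalize(cross(subtract(v1, v0), subtract(v2, v0)));
}

// Brightness factor: cosine of the angle between normal and light direction,
// clamped so surfaces facing away from the light receive zero.
function diffuse(normal, toLight) {
  return Math.max(0, dot(normal, normalize(toLight)));
}

const n = faceNormal([-1, 0, 0], [1, 0, 0], [0, 1.5, 0]);
console.log(n);                                   // [ 0, 0, 1 ]
console.log(diffuse(n, [0, 0, 1]));               // 1 (light straight on)
console.log(diffuse(n, [0, 1, 1]).toFixed(3));    // 0.707 (light at 45 degrees)
console.log(diffuse(n, [0, 0, -1]));              // 0 (light behind the surface)
```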

UV Coordinates. UVs are 2D coordinates that map a flat texture image onto 3D geometry. The U axis corresponds to horizontal and V to vertical, with (0, 0) at the bottom-left and (1, 1) at the top-right of the texture. Every built-in Three.js geometry comes with pre-calculated UV coordinates. For a PlaneGeometry, the mapping is straightforward (the texture maps directly onto the flat surface). For a SphereGeometry, the mapping wraps the texture around the sphere like wrapping a rectangular label around a ball – which inevitably causes distortion at the poles (texture pinching).

When creating custom geometry, you must define UVs manually if you want textures to display correctly. This is one of the trickier aspects of custom geometry and is usually done in 3D modeling software (like Blender) where UV unwrapping tools help you lay out the 3D surface onto a flat 2D space.

Indexed vs Non-Indexed Geometry. Consider a cube: its 8 corner positions are shared by 3 faces each. In non-indexed geometry, every triangle stores its own 3 vertices, duplicating shared positions. A cube needs 36 vertices (12 triangles * 3 vertices). In indexed geometry, unique vertices are stored once, and an index array specifies which vertices form each triangle. A cube needs only 24 unique vertices (8 positions * 3 because each position has different normals/UVs per face) plus an index array.

Indexed geometry uses less memory and can be faster because the GPU caches recently transformed vertices (the post-transform vertex cache). If the same vertex index appears in multiple triangles, the cached result is reused instead of re-running the vertex shader.

Most built-in Three.js geometries are indexed. Custom geometry can be either, but indexed is generally preferred for efficiency.
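A minimal sketch of converting non-indexed data to indexed form follows. It deduplicates on position only, which is an assumption that holds for this flat quad; real geometry may only merge vertices when every attribute (normal, UV) also matches. `toIndexed` is a hypothetical helper name.

```javascript
// Collapse repeated positions into unique vertices plus an index array.
function toIndexed(positions) {
  const unique = [];
  const indices = [];
  const seen = new Map();
  for (let i = 0; i < positions.length; i += 3) {
    const key = `${positions[i]},${positions[i + 1]},${positions[i + 2]}`;
    if (!seen.has(key)) {
      seen.set(key, unique.length / 3); // index of the new unique vertex
      unique.push(positions[i], positions[i + 1], positions[i + 2]);
    }
    indices.push(seen.get(key));
  }
  return { positions: new Float32Array(unique), indices: new Uint16Array(indices) };
}

// A quad as two independent triangles: 6 vertices, 2 of them duplicated.
const quad = new Float32Array([
  -1, -1, 0,   1, -1, 0,   1, 1, 0,   // triangle 1: v0, v1, v2
  -1, -1, 0,   1,  1, 0,  -1, 1, 0,   // triangle 2: v0, v2, v3
]);

const indexed = toIndexed(quad);
console.log(indexed.positions.length / 3);     // 4 unique vertices
console.log(Array.from(indexed.indices));      // [ 0, 1, 2, 0, 2, 3 ]
console.log(quad.byteLength);                  // 72 bytes non-indexed
console.log(indexed.positions.byteLength + indexed.indices.byteLength); // 60 bytes indexed
```

The saving is small for one quad but grows with mesh size, since interior vertices of a dense grid are shared by up to six triangles.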

Built-In Geometries. Three.js provides an extensive library of parametric geometries. The most commonly used are:

- BoxGeometry(width, height, depth, wSegs, hSegs, dSegs): Rectangular box. Segments control subdivision per axis.

- SphereGeometry(radius, wSegs, hSegs): UV sphere. Higher segments = smoother. Default 32x16 is usually sufficient.

- PlaneGeometry(width, height, wSegs, hSegs): Flat rectangle. Subdivide for displacement maps or terrain.

- CylinderGeometry(rTop, rBottom, height, radSegs, hSegs): Cylinder or cone (set rTop or rBottom to 0).

- TorusGeometry(radius, tube, radSegs, tubularSegs): Donut shape.

- TorusKnotGeometry(radius, tube, tubularSegs, radSegs, p, q): Mathematical knot. Great for testing materials.

More specialized geometries include LatheGeometry (surfaces of revolution), ExtrudeGeometry (extrude a 2D shape into 3D), ShapeGeometry (flat 2D shapes), and TubeGeometry (tubes along curves). Each accepts parameters that control resolution and shape.

Custom BufferGeometry. Creating geometry from scratch means defining typed arrays and setting them as attributes. The process is:

- Create a new BufferGeometry

- Define a Float32Array with vertex positions (3 floats per vertex)

- Set the position attribute: geometry.setAttribute('position', new BufferAttribute(array, 3))

- Optionally define normals, UVs, colors, and indices

- Call geometry.computeVertexNormals() if you did not define normals manually

- Use geometry.setIndex(new BufferAttribute(indexArray, 1)) for indexed geometry

Custom geometry is essential for procedural generation (terrain from heightmaps, L-system trees, parametric surfaces) and for any shape that cannot be composed from built-in primitives.
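As a sketch of the procedural case, the function below generates the raw arrays for a subdivided plane, the starting point for heightmap terrain. It only builds typed arrays; attaching them to a BufferGeometry follows the steps listed above. The function name and the vertex layout (rows from bottom to top) are choices made for this example and are not claimed to match PlaneGeometry's internal ordering.

```javascript
// Build positions, UVs, and indices for a flat grid in the XY plane.
function buildPlaneGrid(width, height, wSegs, hSegs) {
  const cols = wSegs + 1;
  const rows = hSegs + 1;
  const positions = new Float32Array(cols * rows * 3);
  const uvs = new Float32Array(cols * rows * 2);
  let p = 0;
  let t = 0;
  for (let iy = 0; iy < rows; iy++) {
    for (let ix = 0; ix < cols; ix++) {
      const u = ix / wSegs;
      const v = iy / hSegs;
      positions[p++] = (u - 0.5) * width;  // x, centered on the origin
      positions[p++] = (v - 0.5) * height; // y, centered on the origin
      positions[p++] = 0;                  // z, replace with a height value for terrain
      uvs[t++] = u;
      uvs[t++] = v;
    }
  }
  const indices = [];
  for (let iy = 0; iy < hSegs; iy++) {
    for (let ix = 0; ix < wSegs; ix++) {
      const a = iy * cols + ix; // bottom-left of this cell
      const b = a + 1;          // bottom-right
      const c = a + cols;       // top-left
      const d = c + 1;          // top-right
      indices.push(a, b, d, a, d, c); // two counter-clockwise triangles
    }
  }
  // Uint16Array holds indices up to 65535; larger grids would need Uint32Array.
  return { positions, uvs, indices: new Uint16Array(indices) };
}

const grid = buildPlaneGrid(10, 10, 10, 10);
console.log(grid.positions.length / 3); // 121 vertices
console.log(grid.indices.length / 3);   // 200 triangles
```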

Dynamic Geometry. You can modify geometry after creation by accessing its attributes and updating values. After modifying a position array, set geometry.attributes.position.needsUpdate = true to tell Three.js to re-upload the data to the GPU. This is how vertex displacement animations work – you change vertex positions every frame in JavaScript and flag the attribute for update. However, this CPU-side approach is slower than GPU-side animation (via vertex shaders) for large vertex counts.
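The update pattern can be sketched without a GPU by using a stand-in object. Here `positionAttribute` is a plain object that mimics only the `array` and `needsUpdate` fields mentioned above; it is not a real BufferAttribute, and `applyWave` is a hypothetical helper.

```javascript
// Stand-in for geometry.attributes.position: the typed array plus the flag.
const positionAttribute = {
  array: new Float32Array([0, 0, 0, 1, 0, 0, 2, 0, 0, 3, 0, 0]),
  itemSize: 3,
  needsUpdate: false,
};

// Displace each vertex's y coordinate with a sine wave based on its x position.
function applyWave(attribute, time, amplitude) {
  const data = attribute.array;
  for (let i = 0; i < data.length; i += attribute.itemSize) {
    const x = data[i];
    data[i + 1] = Math.sin(x + time) * amplitude; // overwrite y in place
  }
  attribute.needsUpdate = true; // signal that the data must be re-uploaded
}

applyWave(positionAttribute, 0, 0.5);
console.log(positionAttribute.needsUpdate);         // true
console.log(positionAttribute.array[1]);            // 0 (sin(0) = 0)
console.log(positionAttribute.array[4].toFixed(3)); // 0.421
```

In a real application this function would run inside the animation loop with the elapsed time, and the renderer would clear the flag after uploading the new data.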

How This Fits in Projects

Project 1 (Spinning Geometry Gallery) uses built-in geometries extensively, cycling through different shapes. Project 2 (Solar System Orrery) creates spheres for planets with varying segment counts. Project 9 (Procedural Terrain) creates custom BufferGeometry with displacement-based vertex positions. Understanding how vertex data flows from typed arrays to the GPU is essential for all three.

Definitions & Key Terms

- BufferGeometry: Three.js geometry class that stores vertex data in GPU-efficient typed arrays (Float32Array)

- BufferAttribute: A typed array associated with a geometry, storing per-vertex data with a defined item size

- Mesh: An Object3D combining a geometry (shape) and material (appearance) into a renderable object

- Vertex: A point in 3D space defined by (x, y, z) coordinates; the building block of all geometry

- Face/Triangle: The fundamental rendering primitive; three vertices form one triangle

- Normal: A unit vector perpendicular to a surface, used for lighting calculations

- UV Coordinates: 2D coordinates (0-1 range) mapping texture pixels to geometry vertices

- Index Array: An optional array specifying which vertices form each triangle, enabling vertex sharing

- Segments: Parameters controlling how many subdivisions a built-in geometry has; more segments = smoother but more costly

- computeVertexNormals(): Method that automatically calculates smooth normals by averaging face normals at shared vertices

Mental Model Diagram

GEOMETRY: FROM VERTICES TO GPU
================================

What You Define (JavaScript)             What the GPU Receives
================================         =====================

Float32Array (positions):                Vertex Buffer Object (VBO):
[x0,y0,z0, x1,y1,z1, x2,y2,z2]    -->    GPU memory (position attribute)

Float32Array (normals):                  Vertex Buffer Object (VBO):
[nx,ny,nz, nx,ny,nz, nx,ny,nz]    -->    GPU memory (normal attribute)

Float32Array (uvs):                      Vertex Buffer Object (VBO):
[u0,v0, u1,v1, u2,v2]             -->    GPU memory (uv attribute)

Uint16Array (indices):                   Index Buffer Object (IBO):
[0, 1, 2, 0, 2, 3]                -->    GPU memory (element indices)

A SINGLE TRIANGLE
==================

        v2 (x2,y2,z2)
        /\
       /  \
      /    \       Normal (nx,ny,nz)
     /      \      points OUT of screen
    /________\     toward you
   v0        v1
(x0,y0,z0) (x1,y1,z1)

INDEXED vs NON-INDEXED (Quad Example)
======================================

Non-Indexed (6 vertices):        Indexed (4 vertices + indices):
Triangle 1: v0, v1, v2           Vertices: v0, v1, v2, v3
Triangle 2: v0, v2, v3           Indices:  [0,1,2, 0,2,3]

v3----v2        v3----v2        v3----v2
|   / |         |   / |         |   / |
|  /  |    =    | T2  |    =    |  /  |
| /   |         | / T1|         | /   |
v0----v1        v0----v1        v0----v1

Memory: 6*3 = 18 floats          Memory: 4*3 = 12 floats + 6 indices
(duplicates v0, v2)              (shared vertices, more efficient)

MESH = GEOMETRY + MATERIAL
===========================

+-------------------+     +-----------------+
| BufferGeometry    |     | Material        |
| (shape data)      |     | (appearance)    |
| - positions       |     | - color         |
| - normals         |  +  | - roughness     |  =  Mesh (renderable)
| - uvs             |     | - metalness     |
| - indices         |     | - map (texture) |
+-------------------+     +-----------------+

SPHERE SEGMENT COUNT COMPARISON
================================

Low (8x4):        Medium (16x8):     High (32x16):
   ____              ____               ____
  /    \            /    \             /    \
 |  /\  |          |      |           |      |
 | /  \ |          |      |           |      |
  \____/            \____/             \____/
 64 tris           256 tris           1024 tris
 Faceted look      Decent             Smooth

How It Works

Step-by-step process for creating and rendering geometry:

- Define vertex data – create Float32Array with positions (3 floats per vertex), normals, UVs

- Create BufferGeometry – instantiate and attach attributes via setAttribute()

- Optional: set indices – define an index array for vertex sharing between triangles

- Compute normals – call computeVertexNormals() if normals were not defined manually

- Create Material – choose or configure a material (color, textures, PBR properties)

- Create Mesh – combine geometry and material into a Mesh object

- Add to scene – scene.add(mesh) places it in the scene graph

- Renderer processes Mesh – during render, the geometry’s buffer data is uploaded to GPU VBOs

- Vertex shader reads attributes – accesses position, normal, uv via the attribute qualifier

- Triangles are rasterized – GPU converts indexed vertices into screen-space fragments

Invariants:

- Every geometry must have at least the position attribute

- Normals must be defined (manually or via computeVertexNormals()) for lighting to work

- UVs must be defined for textures to map correctly

- Typed arrays must have the correct length (vertices * item_size)

- needsUpdate = true must be set after modifying attribute data

Failure Modes:

- No geometry visible: forgot to add mesh to scene, or mesh is at origin and camera is looking elsewhere

- Black triangles with no shading: normals are missing or incorrect

- Texture appears stretched or wrong: UV coordinates are missing or incorrect

- Memory errors: typed array size does not match vertex count * item size

Minimal Concrete Example

// Create a custom triangle from scratch (assumes an existing `scene`)
import * as THREE from 'three';

// Define 3 vertices (a triangle), listed counter-clockwise so it faces +Z
const positions = new Float32Array([
  -1.0, 0.0, 0.0,  // vertex 0 (left)
   1.0, 0.0, 0.0,  // vertex 1 (right)
   0.0, 1.5, 0.0   // vertex 2 (top)
]);

// Define UV coordinates for texturing
const uvs = new Float32Array([
  0.0, 0.0,  // vertex 0 -> bottom-left of texture
  1.0, 0.0,  // vertex 1 -> bottom-right of texture
  0.5, 1.0   // vertex 2 -> top-center of texture
]);

// Create geometry and set attributes
const geometry = new THREE.BufferGeometry();
geometry.setAttribute('position', new THREE.BufferAttribute(positions, 3));
geometry.setAttribute('uv', new THREE.BufferAttribute(uvs, 2));
geometry.computeVertexNormals();  // auto-calculate normals

// Create mesh and add to scene
const material = new THREE.MeshStandardMaterial({ color: 0xff6600 });
const triangle = new THREE.Mesh(geometry, material);
scene.add(triangle);

Common Misconceptions

- “More segments always means better quality”: Beyond a threshold determined by viewing distance and screen resolution, additional segments add GPU cost with zero visible improvement. A sphere at 100 pixels on screen looks identical at 32 segments vs 128 segments, but the latter uses 16 times more triangles.

- “You must manually define normals for every geometry”: computeVertexNormals() handles the vast majority of cases. Only define normals manually when you need flat shading on specific faces, custom lighting tricks, or when importing geometry without normals.

- “BoxGeometry creates exactly 8 vertices”: A box has 8 corner positions but actually creates 24 vertices. Each corner appears in 3 faces with different normals and UVs, so each corner position is duplicated 3 times with different attribute values.

- “You can share a geometry between meshes and they will be independent”: Geometry IS shared – if you modify the buffer data, ALL meshes using that geometry are affected. This is actually useful for instancing but surprising if unintended. Clone the geometry with geometry.clone() if you need independent copies.

Check-Your-Understanding Questions

- Why does BufferGeometry use Float32Array instead of regular JavaScript arrays?

- What is the difference between indexed and non-indexed geometry, and when would you prefer one over the other?

- If a PlaneGeometry has 10 width segments and 10 height segments, how many triangles does it contain?

- What happens if you modify a geometry’s position attribute but forget to set needsUpdate = true?

- Why does a cube need 24 vertices instead of 8, even though it only has 8 corner positions?

Check-Your-Understanding Answers

- Float32Array is a typed array whose binary layout matches what the GPU expects, so it can be handed to the buffer upload call as-is. Regular JavaScript arrays would have to be converted to a typed array before every upload, adding significant overhead. Float32Array avoids that conversion when filling GPU vertex buffer objects (VBOs).

- Indexed geometry stores unique vertices once and uses an index array to reference them, saving memory and enabling GPU vertex caching. Non-indexed geometry duplicates vertices at shared edges. Prefer indexed for most cases (efficiency). Non-indexed is simpler for procedural generation where vertices are not shared (e.g., each triangle has unique normals for flat shading).

- A 10x10 segment plane has 10 * 10 = 100 quads. Each quad is 2 triangles. Total: 200 triangles. Vertices: (10+1) * (10+1) = 121 unique positions.

- The GPU retains the old data in its buffer. Your modifications exist only in the JavaScript typed array but are never uploaded to the GPU. The mesh renders with the old, unmodified geometry.

- Each corner of a cube is shared by 3 faces. Each face needs a different normal vector (pointing outward from that face) and different UV coordinates (mapping the texture to that face). Since normals and UVs are per-vertex attributes, each corner must be duplicated 3 times with different normal/UV values. 8 corners * 3 = 24 vertices.

Real-World Applications

- Procedural terrain: Custom BufferGeometry with vertex positions derived from heightmap data or noise functions

- Data visualization: Creating geometry programmatically to represent data points as 3D shapes

- 3D modeling tools: Manipulating individual vertices for mesh editing

- Game environments: Combining built-in geometries for level construction

- Scientific visualization: Custom geometry for molecular models, fluid surfaces, or medical imaging data

Where You Will Apply It

- Project 1 (Spinning Geometry Gallery): Explore all built-in geometry types, compare visual quality vs segment count

- Project 2 (Solar System Orrery): Create spheres for planets and rings using TorusGeometry or custom ring shapes

- Project 9 (Procedural Terrain): Build custom BufferGeometry from scratch with displacement-based vertex positions

References

- Three.js BufferGeometry documentation: https://threejs.org/docs/#api/en/core/BufferGeometry

- Discover Three.js - Built-in Geometries: https://discoverthreejs.com/book/first-steps/built-in-geometries/

- Three.js Fundamentals - Custom BufferGeometry: https://threejsfundamentals.org/threejs/lessons/threejs-custom-buffergeometry.html

- “Real-Time Rendering” by Akenine-Moller, Haines, Hoffman - Ch. 16 (Polygonal Techniques)

- “Interactive Computer Graphics” by Edward Angel - Ch. 6 (Geometric Objects and Transformations)

Key Insight

Geometry is just organized arrays of numbers – positions, normals, UVs stored in typed arrays – and the entire visual complexity of a 3D scene comes from how the GPU’s vertex and fragment shaders interpret these numbers in combination with materials and lighting.

Summary

BufferGeometry stores vertex data (positions, normals, UVs) in GPU-efficient typed arrays, and a Mesh combines this geometry with a material to create a renderable object. Three.js provides over 15 built-in parametric geometries, but custom BufferGeometry enables procedural shapes, terrain, and data-driven visualization. The key concepts are vertex attributes (what data each vertex carries), indexed vs non-indexed storage (efficiency tradeoff), and segment count (quality vs performance tradeoff). Every 3D object you see on screen is ultimately just triangles defined by these vertex buffers.

Homework/Exercises

1. Segment Count Experiment: Using pseudocode, describe how you would create a SphereGeometry at three different segment counts (8x4, 32x16, 128x64) and visually compare them. What would you expect to see at each level? At what segment count does the sphere appear “smooth enough” on a typical desktop screen?

2. Custom Quad: Write pseudocode to create a custom BufferGeometry for a single quad (two triangles forming a rectangle). Define positions, normals (pointing toward +Z), and UV coordinates. Use indexed geometry to share the 4 corner vertices between the 2 triangles.

3. Vertex Count Calculation: How many triangles does a TorusKnotGeometry with 128 tubular segments and 16 radial segments create? How many unique vertices does it have in indexed form? Show your calculation.

Solutions to Homework/Exercises

1. At 8x4 (48 triangles; the simple 2 × 8 × 4 = 64 grid estimate overcounts because each pole row contributes only one triangle per segment): clearly faceted, you can see individual triangle edges, looks like a low-poly gem. At 32x16 (960 triangles): appears smooth at typical viewing distances, you would need to look closely at the silhouette to see facets. At 128x64 (16,128 triangles): smooth even in close-up, but indistinguishable from 32x16 at normal viewing distance. The “sweet spot” is typically 32x16 for most desktop applications. Beyond that, the visual improvement is negligible, while the triangle count grows with the product of the two segment counts (quadrupling both gives roughly 16 times as many triangles).

2. Indexed quad, shown as JavaScript so it can be run as-is:

```javascript
import { BufferGeometry, BufferAttribute } from 'three';

// Four corner vertices in the XY plane (x, y, z per vertex)
const positions = new Float32Array([
  -1, -1, 0, // v0 (bottom-left)
   1, -1, 0, // v1 (bottom-right)
   1,  1, 0, // v2 (top-right)
  -1,  1, 0, // v3 (top-left)
]);

// Every normal points toward +Z
const normals = new Float32Array([0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 1]);

// Standard UV mapping: (0, 0) at bottom-left, (1, 1) at top-right
const uvs = new Float32Array([0, 0, 1, 0, 1, 1, 0, 1]);

// Two counter-clockwise triangles sharing v0 and v2
const indices = new Uint16Array([0, 1, 2, 0, 2, 3]);

const geometry = new BufferGeometry();
geometry.setAttribute('position', new BufferAttribute(positions, 3));
geometry.setAttribute('normal', new BufferAttribute(normals, 3));
geometry.setAttribute('uv', new BufferAttribute(uvs, 2));
geometry.setIndex(new BufferAttribute(indices, 1));
```

3. A TorusKnotGeometry with T tubular segments and R radial segments creates T * R * 2 triangles. With T=128, R=16: 128 * 16 * 2 = 4,096 triangles. Unique vertices in indexed form: (128+1) * (16+1) = 129 * 17 = 2,193 vertices. The +1 in each direction accounts for the seam: the generator emits an extra ring of vertices, and an extra vertex per ring, so that the UV coordinates can run from 0 to 1 where the tube wraps around. The count depends only on the two segment counts, not on the p and q winding parameters of the knot.
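The counting rules behind solutions 1 and 3 can be checked with a few lines of plain JavaScript. This is a sketch with hypothetical helper names (`sphereCounts`, `torusKnotCounts`). It assumes a full, uncut sphere and a vertex grid with one extra row and column for the seam, so it is worth confirming the numbers against `geometry.index.count / 3` and `geometry.attributes.position.count` in a real scene.

```javascript
// Hypothetical helpers: vertex and triangle counts for grid-based geometries.

// Assumes a full sphere (no phi/theta cut-outs).
function sphereCounts(widthSegments, heightSegments) {
  const vertices = (widthSegments + 1) * (heightSegments + 1);
  // Two triangles per grid cell is only an upper bound...
  const gridTriangles = 2 * widthSegments * heightSegments;
  // ...because each of the two pole rows contributes one triangle per segment.
  const triangles = gridTriangles - 2 * widthSegments;
  return { vertices, gridTriangles, triangles };
}

function torusKnotCounts(tubularSegments, radialSegments) {
  return {
    vertices: (tubularSegments + 1) * (radialSegments + 1),
    triangles: 2 * tubularSegments * radialSegments,
  };
}

console.log(sphereCounts(8, 4));       // { vertices: 45, gridTriangles: 64, triangles: 48 }
console.log(torusKnotCounts(128, 16)); // { vertices: 2193, triangles: 4096 }
```

The gap between `gridTriangles` and `triangles` shrinks in relative terms as the segment count rises, which is why the simpler 2 × width × height estimate is usually good enough for budgeting.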

---

### Chapter 3: Materials and Physically Based Rendering (PBR)

**Fundamentals**

Materials define the visual appearance of surfaces -- how they interact with light, what color they are, whether they are transparent, and how they reflect the environment. Three.js provides a spectrum of material types from the simplest unlit MeshBasicMaterial to the most physically accurate MeshPhysicalMaterial. At the heart of modern 3D rendering lies Physically Based Rendering (PBR), a shading model that simulates how light behaves in the real world using principles of energy conservation and microfacet theory. PBR materials are defined primarily by two properties: roughness (how blurry or sharp reflections are) and metalness (whether the surface behaves like a metal or a dielectric). These two parameters, combined with a base color (albedo), can reproduce most everyday materials -- from rough concrete to polished chrome, from matte wood to glossy plastic. Three.js implements PBR through MeshStandardMaterial (the workhorse for most use cases) and MeshPhysicalMaterial (an extension adding clearcoat, transmission, sheen, and iridescence for specialized surfaces like glass, car paint, and fabric). Choosing the right material type is both an artistic and a performance decision: simpler materials render faster but look less realistic.

**Deep Dive**

Understanding materials requires understanding how light interacts with surfaces at a physical level, and how Three.js approximates these interactions at different levels of fidelity.

**The Material Performance Hierarchy.** Three.js materials form a clear hierarchy from fastest to most expensive:

1. **MeshBasicMaterial** -- No lighting calculations at all. The surface displays its color or texture at full brightness regardless of lights in the scene. Use for UI elements, skyboxes, wireframes, or stylized unlit effects. This is the fastest material because the fragment shader simply outputs the base color.

2. **MeshLambertMaterial** -- Diffuse-only lighting using the Lambertian reflectance model. Light scatters equally in all directions from the surface, so there are no specular highlights (no shiny spots). Releases before r144 computed this lighting per-vertex, which was cheap but looked coarse on low-poly geometry; current releases compute it per-pixel, so the savings now come from skipping the specular term. Ideal for matte surfaces, low-poly art styles, and mobile applications where performance matters.

3. **MeshPhongMaterial** -- Adds specular highlights to Lambert's diffuse lighting using the Blinn-Phong model. The `shininess` property controls how tight the specular highlight is (higher = shinier). Lighting is calculated per-pixel for smoother results. This was the standard for "shiny" objects before PBR but produces less realistic results than StandardMaterial.

4. **MeshStandardMaterial** -- Full PBR using the Cook-Torrance microfacet model. Defines surfaces with `roughness` (0 = mirror, 1 = fully diffuse) and `metalness` (0 = non-metal, 1 = metal). Energy-conserving (diffuse + specular light never exceeds incoming light). This is the recommended material for realistic rendering and covers the vast majority of real-world surfaces.

5. **MeshPhysicalMaterial** -- Extends StandardMaterial with advanced properties: clearcoat (a second reflective layer like car paint or lacquer), transmission (light passing through like glass), sheen (soft glow for fabrics), iridescence (thin-film interference for soap bubbles or oil slicks), and IOR (index of refraction for bending light). Significantly more expensive per pixel but necessary for certain surface types.
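To make the difference between items 2 and 3 concrete, here is a minimal sketch of the textbook Lambert and Blinn-Phong terms in plain JavaScript. The function names are hypothetical, vectors are plain `[x, y, z]` arrays pointing away from the surface, and the formulas are the classic ones rather than a copy of the Three.js shader source, which adds further factors.

```javascript
// Minimal vector helpers for 3-component arrays.
const dot = (a, b) => a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
const normalize = (v) => {
  const len = Math.hypot(v[0], v[1], v[2]);
  return [v[0] / len, v[1] / len, v[2] / len];
};

// Lambert: brightness depends only on the angle between normal and light.
function lambertDiffuse(normal, lightDir) {
  return Math.max(dot(normalize(normal), normalize(lightDir)), 0);
}

// Blinn-Phong: highlight strength from the half vector between the
// light and view directions, sharpened by the shininess exponent.
function blinnPhongSpecular(normal, lightDir, viewDir, shininess) {
  const l = normalize(lightDir);
  const v = normalize(viewDir);
  const h = normalize([l[0] + v[0], l[1] + v[1], l[2] + v[2]]);
  return Math.pow(Math.max(dot(normalize(normal), h), 0), shininess);
}

const normal = [0, 0, 1];
const light = [1, 0, 1]; // 45 degrees off the normal
const view = [0, 0, 1];  // camera looking straight at the surface

console.log(lambertDiffuse(normal, light));               // view-independent
console.log(blinnPhongSpecular(normal, light, view, 10));  // broad highlight
console.log(blinnPhongSpecular(normal, light, view, 100)); // tight highlight
```

Raising the exponent makes the same off-axis sample much darker, which is what "higher = shinier" means in practice: the highlight shrinks to a smaller spot.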

**PBR: Roughness and Metalness.** The genius of PBR is that two properties capture the essential visual character of almost any material:

- **Roughness** controls the spread of reflections. At roughness 0, the surface is a perfect mirror -- light reflects in a single direction. At roughness 1, light scatters in all directions equally (fully diffuse). In between, reflections become progressively blurrier. Physically, roughness models the microscopic surface irregularities (microfacets) that scatter light.

- **Metalness** determines the fundamental behavior of light interaction. Non-metals (metalness 0) reflect a small amount of untinted light, roughly 4% when viewed head-on and rising toward 100% at glancing angles (the Fresnel effect), and show their base color through diffuse reflection. Metals (metalness 1) reflect their base color as specular reflection and have no diffuse component -- a gold surface reflects gold-colored light. Real surfaces are essentially either metal or non-metal, so metalness is best treated as a binary property; intermediate values are useful mainly for transitions, such as worn or dirty edges where metal shows through a non-metallic coating.
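The way metalness redistributes the base color can be sketched in a few lines. This follows the common metalness-workflow convention (diffuse color scaled by `1 - metalness`, specular reflectance blended from a fixed dielectric value toward the base color). The helper name, the 0.04 constant as a stand-in for a typical non-metal, and the gold-like color are assumptions for illustration, not values taken from the Three.js source.

```javascript
// Assumed head-on reflectance of a typical non-metal (about 4%).
const DIELECTRIC_F0 = 0.04;

// Linear interpolation, like GLSL's mix().
const mix = (a, b, t) => a * (1 - t) + b * t;

// Hypothetical helper: split a linear RGB base color into the part used for
// diffuse reflection and the part used as head-on specular reflectance.
function splitBaseColor(baseColor, metalness) {
  return {
    diffuse: baseColor.map((c) => c * (1 - metalness)),
    specularF0: baseColor.map((c) => mix(DIELECTRIC_F0, c, metalness)),
  };
}

const goldish = [1.0, 0.77, 0.34]; // illustrative color, not a measured value

console.log(splitBaseColor(goldish, 1)); // metal: no diffuse, tinted reflections
console.log(splitBaseColor(goldish, 0)); // non-metal: full diffuse, faint untinted reflections
```

With metalness at 1 the diffuse term vanishes entirely, which is why a metallic material with nothing to reflect looks dark.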

**Energy Conservation.** PBR materials enforce energy conservation: the total light leaving a surface can never exceed the light arriving. If a surface has strong specular reflections, the diffuse component is automatically reduced. This is why PBR materials look right even without careful tuning -- the physics prevent unrealistic combinations.
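A small numeric sketch shows the trade-off. It uses Schlick's approximation for the Fresnel term and a simple textbook split in which whatever is reflected specularly is no longer available for diffuse reflection. The function names are hypothetical, and the real Three.js shaders are more elaborate, so read this as an illustration of the principle only.

```javascript
// Schlick's approximation: reflectance rises from f0 (head-on, cosTheta = 1)
// to 1 (grazing, cosTheta = 0).
function fresnelSchlick(cosTheta, f0) {
  const c = Math.min(Math.max(cosTheta, 0), 1);
  return f0 + (1 - f0) * Math.pow(1 - c, 5);
}

// Textbook energy split for a single channel: the specular share is taken
// first, and only the remainder (for non-metals) can be reflected diffusely.
function energySplit(cosTheta, f0, metalness) {
  const specular = fresnelSchlick(cosTheta, f0);
  const diffuse = (1 - specular) * (1 - metalness);
  return { specular, diffuse, total: specular + diffuse };
}

// A non-metal viewed head-on, at 60 degrees, and almost edge-on.
for (const cosTheta of [1, 0.5, 0.05]) {
  console.log(cosTheta, energySplit(cosTheta, 0.04, 0));
}
```

As the viewing angle approaches grazing, the specular share grows and the diffuse share shrinks by the same amount, so the total never exceeds 1.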

**Texture Maps for PBR.** Real materials rarely have uniform properties across their entire surface. Texture maps allow per-pixel variation:

- **map** (albedo/color): The base color of the surface

- **normalMap**: Encodes surface detail as normal deviations, creating the illusion of bumps and scratches without extra geometry

- **roughnessMap**: Per-pixel roughness values (grayscale, where white = rough, black = smooth)

- **metalnessMap**: Per-pixel metalness values (grayscale, where white = metal, black = non-metal)

- **aoMap**: Ambient occlusion -- darkens crevices and contact areas for subtle shadowing

- **emissiveMap**: Per-pixel self-illumination (for glowing screens, LEDs, lava)

- **displacementMap**: Actually moves vertices along their normals (requires subdivided geometry)

- **envMap**: Environment map for reflections (cubemap or equirectangular)
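One detail worth knowing when combining these maps: Three.js reads ambient occlusion from the red channel, roughness from the green channel, and metalness from the blue channel, and multiplies the sampled roughness and metalness by the material's scalar `roughness` and `metalness`. That is why a single packed texture can serve as `aoMap`, `roughnessMap`, and `metalnessMap` at once. The sketch below mimics that per-pixel combination in plain JavaScript; the helper name and the plain-object inputs are hypothetical, and it ignores `aoMapIntensity`.

```javascript
// texel: one RGB sample from a packed occlusion/roughness/metalness texture,
//        each channel in the range 0..1.
// material: the scalar roughness and metalness set on the material.
function resolvePbrInputs(texel, material) {
  return {
    ambientOcclusion: texel.r,                 // red channel
    roughness: material.roughness * texel.g,   // green channel, scaled by the scalar
    metalness: material.metalness * texel.b,   // blue channel, scaled by the scalar
  };
}

// A smooth metal pixel sitting in a slightly occluded crevice.
const texel = { r: 0.5, g: 0.25, b: 1.0 };

console.log(resolvePbrInputs(texel, { roughness: 1, metalness: 1 }));
// { ambientOcclusion: 0.5, roughness: 0.25, metalness: 1 }

console.log(resolvePbrInputs(texel, { roughness: 1, metalness: 0 }));
// metalness is 0: the map has no effect while the scalar stays at 0
```

Because the scalars act as multipliers, they are usually set to 1 when the corresponding map is assigned; leaving `metalness` at its default of 0 silently cancels the `metalnessMap`.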

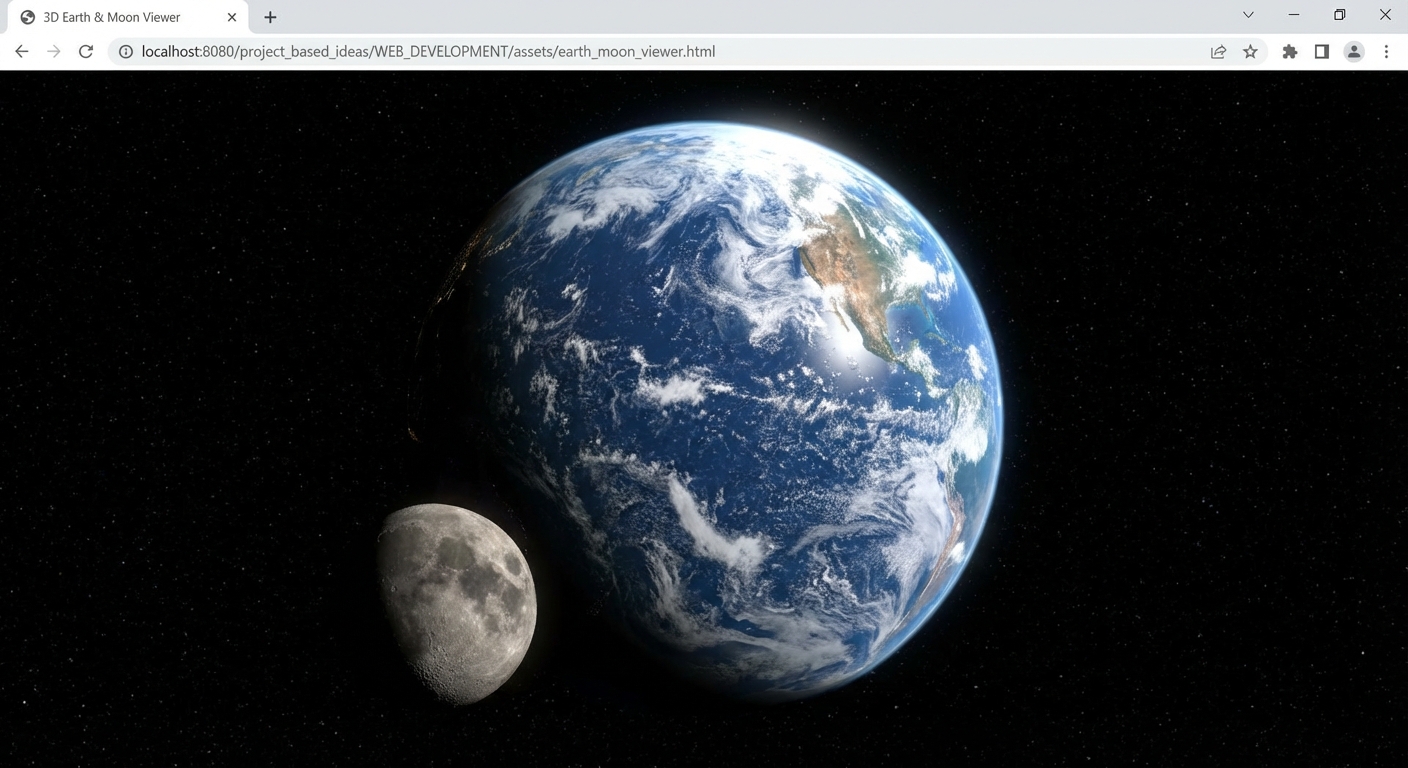

**Environment Maps and Reflections.** For PBR to look truly realistic, the material needs something to reflect. Without an environment map, metallic surfaces appear almost black: they have no diffuse component, and apart from direct highlights there is nothing for them to reflect. Environment maps capture the surrounding environment as a texture that is "seen" in reflections. The simplest approach is to load an HDR equirectangular image with RGBELoader, set its `mapping` to `EquirectangularReflectionMapping`, and assign it to `scene.environment`. This automatically provides reflections and image-based lighting for all PBR materials in the scene.
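The wiring step can be sketched as a small helper. `applyEnvironment` is a hypothetical name, and `THREE` is passed in as a parameter so the sketch does not depend on how the library is imported. The loader import path and the file name in the trailing comment are assumptions that may differ between Three.js releases, so check the documentation for the version you use.

```javascript
// Hypothetical helper: wire an already-loaded equirectangular HDR texture
// into a scene so PBR materials get reflections and image-based lighting.
function applyEnvironment(THREE, scene, hdrTexture, { showAsBackground = true } = {}) {
  // Tell the renderer the image is a 360-degree equirectangular panorama.
  hdrTexture.mapping = THREE.EquirectangularReflectionMapping;

  // Used by every MeshStandardMaterial / MeshPhysicalMaterial that has no
  // envMap of its own.
  scene.environment = hdrTexture;

  // Optionally show the same image behind the objects.
  if (showAsBackground) scene.background = hdrTexture;

  return scene;
}

// Typical call site (assumes a bundler setup and a hypothetical 'studio.hdr'):
//   import * as THREE from 'three';
//   import { RGBELoader } from 'three/addons/loaders/RGBELoader.js';
//   new RGBELoader().load('studio.hdr', (texture) => {
//     applyEnvironment(THREE, scene, texture);
//   });
```

Keeping `scene.background` optional is a deliberate choice: product shots often use the HDR only for lighting and reflections while showing a plain color behind the object.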

**MeshToonMaterial.** For non-photorealistic rendering (NPR), MeshToonMaterial provides cel-shading (flat bands of light/shadow like a cartoon). It uses a gradient map to define how light bands transition. This is stylistically different from PBR but very popular for games and animated content.
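The banding idea can be illustrated without any rendering code. The sketch below treats the gradient map as a short array of brightness values and picks one with nearest-neighbour sampling, based on how directly the surface faces the light. The function name and the three-step gradient are hypothetical, and the real material works on textures and colors rather than single numbers.

```javascript
// gradientStops: brightness values of the gradient map, darkest to brightest.
// nDotL: dot product of the surface normal and the light direction (-1..1).
function toonBrightness(nDotL, gradientStops) {
  // Remap -1..1 to a 0..1 lookup coordinate and clamp it.
  const u = Math.min(Math.max(nDotL * 0.5 + 0.5, 0), 1);
  // Nearest-neighbour lookup: no blending between neighbouring stops.
  const index = Math.min(
    Math.floor(u * gradientStops.length),
    gradientStops.length - 1
  );
  return gradientStops[index];
}

const threeTone = [0.2, 0.6, 1.0]; // shadow, midtone, lit

console.log(toonBrightness(-1, threeTone)); // 0.2 (facing away from the light)
console.log(toonBrightness(0, threeTone));  // 0.6 (light at a right angle)
console.log(toonBrightness(1, threeTone));  // 1   (facing the light)
```

The hard steps depend on the lookup not interpolating. In Three.js that means setting the gradient texture's `minFilter` and `magFilter` to `NearestFilter`; with linear filtering the bands blur into a smooth ramp.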

**ShaderMaterial and RawShaderMaterial.** When built-in materials cannot achieve your vision, custom shaders give full control. ShaderMaterial accepts custom vertex and fragment GLSL code while providing Three.js's built-in uniforms (matrices, camera position). RawShaderMaterial provides no built-ins -- you declare everything yourself. These are covered in depth in Chapter 12 (Shaders and GLSL).
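As a small preview, the options object for a ShaderMaterial might look like the sketch below. `buildTintShaderOptions` and the `uTint` uniform are hypothetical names, and `color` is assumed to be a `THREE.Color`. The vertex shader relies on `position`, `uv`, `projectionMatrix`, and `modelViewMatrix` being injected by ShaderMaterial.

```javascript
// Hypothetical helper: builds the options passed to
// `new THREE.ShaderMaterial(options)`.
function buildTintShaderOptions(color) {
  return {
    // Values the JavaScript side can change at runtime.
    uniforms: { uTint: { value: color } },

    vertexShader: /* glsl */ `
      varying vec2 vUv;
      void main() {
        vUv = uv;
        // position, uv, and both matrices are provided by ShaderMaterial.
        gl_Position = projectionMatrix * modelViewMatrix * vec4(position, 1.0);
      }
    `,

    fragmentShader: /* glsl */ `
      uniform vec3 uTint;
      varying vec2 vUv;
      void main() {
        // Fade the tint from black at the bottom of the UV range to full
        // strength at the top.
        gl_FragColor = vec4(uTint * vUv.y, 1.0);
      }
    `,
  };
}

// Typical call site (assumes `THREE` is imported elsewhere):
//   const material = new THREE.ShaderMaterial(
//     buildTintShaderOptions(new THREE.Color(0xff8800))
//   );
```

With RawShaderMaterial the same shaders would not compile as written: the attributes, matrix uniforms, and a precision statement would all have to be declared by hand.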

**How This Fits in Projects**

Project 1 (Spinning Geometry Gallery) cycles through different material types to compare their visual impact. Project 3 (Material Showcase) focuses entirely on PBR, exploring roughness/metalness combinations with texture maps. Project 5 (Product Configurator) uses PBR materials with environment maps for realistic product rendering. Project 8 (Shader Effects) extends beyond built-in materials into custom ShaderMaterial.

**Definitions & Key Terms**

- **PBR (Physically Based Rendering)**: A rendering approach simulating real-world light behavior using energy conservation and microfacet theory

- **Roughness**: Controls reflection sharpness; 0 = mirror-like, 1 = fully diffuse (opposite of shininess)

- **Metalness**: Determines if a surface behaves as metal (1) or dielectric (0); controls whether reflections are tinted by base color

- **Albedo**: The base color of a material, separate from lighting effects

- **Energy Conservation**: PBR principle that total reflected light cannot exceed incoming light

- **Microfacet Model**: Theory that surfaces are composed of tiny mirror-like facets oriented at different angles, explaining roughness

- **Fresnel Effect**: Surfaces reflect more light at glancing angles; visible as rim highlights on non-metallic surfaces

- **Emissive**: Self-illumination independent of scene lights; the surface appears to glow

- **Clearcoat**: An additional transparent reflective layer on top of the base material (MeshPhysicalMaterial only)

- **Transmission**: How much light passes through a material; used for glass, water, and translucent surfaces

- **IOR (Index of Refraction)**: How much light bends when entering a transparent material; glass is approximately 1.5

- **envMap**: Environment texture providing reflections and ambient lighting for PBR surfaces
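The IOR and Fresnel entries are linked by a standard optics formula: for light arriving head-on from air, the reflected fraction is ((ior - 1) / (ior + 1))². The sketch below evaluates it for a few common materials. The helper name is hypothetical, the IOR values are typical textbook figures, and the formula assumes the surrounding medium has an IOR of 1.

```javascript
// Head-on reflectance of a dielectric surface seen from air (IOR 1).
function f0FromIor(ior) {
  return Math.pow((ior - 1) / (ior + 1), 2);
}

console.log(f0FromIor(1.5).toFixed(3));  // "0.040" (glass, many plastics)
console.log(f0FromIor(1.33).toFixed(3)); // "0.020" (water)
console.log(f0FromIor(2.4).toFixed(3));  // diamond, noticeably more reflective
```

This is where the "roughly 4%" rule of thumb for non-metals comes from: most common dielectrics have an IOR close to 1.5.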

**Mental Model Diagram**

MATERIAL PERFORMANCE HIERARCHY ================================

Fastest Most Realistic | | v v